March 30, 2007

March 25, 2007

Barcamp LA 3

more photos here

I am at Barcamp LA.

Despite my initial trepidation it's turning out to be pretty fun. I got to see some friends I hadn't seen in a long time: Richard (who gave a presentation on creating social context engines that was a lot more fun than you might have thought social context engines could be) and Kim, and Marc Brown.

It was also nice to meet an IRC chixxor in person, along with her hacker boyfriend. Britta and Doug and I tried diligently for a while to liveconsume all the liveblogging and twitterstreams and other media being generated by the conference as it happened, but it proved to be too much. Or at least too something.

Actually I think Britta could have taken any five of her links from del.icio.us and woven them together in a presentation that would have beaten 90% of what we've seen here.

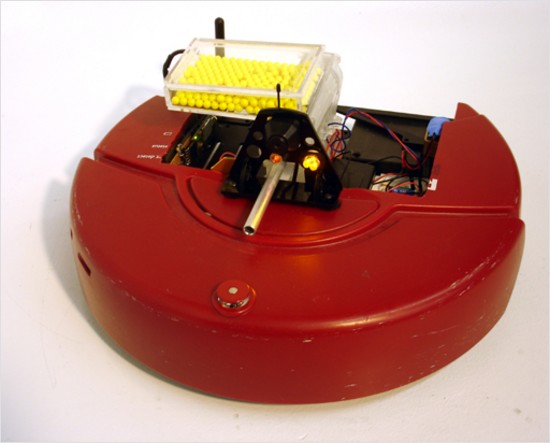

I toyed with the idea of giving a talk on creating conversational interfaces, but I ran out of time to put anything together. And then I realized that what I'd really like is to talk about how to build a sub-$1K UAV. Except I'd call it “HOWTO Build a Remotely Piloted Bioweapon Dispensing Vehicle On a Budget”.

March 23, 2007

March 22, 2007

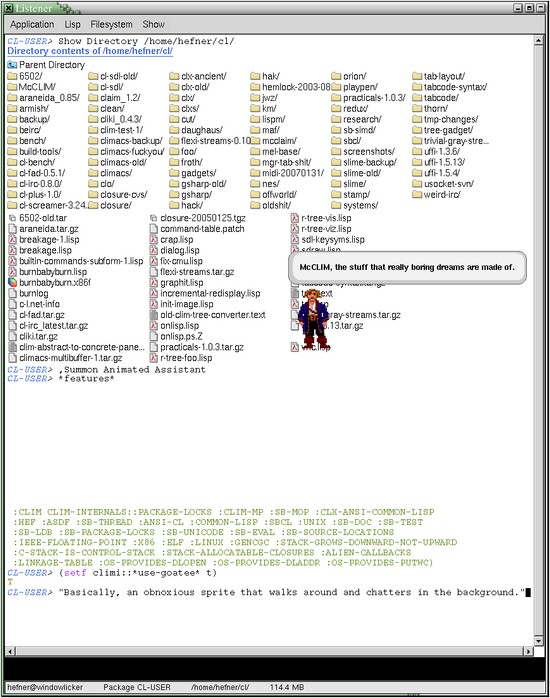

The Contemporary MCL Situation

Some recent favorites.

Brian Hayes on an MCL fan's modern existence:

If Franz Kafka were a Lisp programmer, he would be an MCL user -- waiting patiently by the outer gate of the castle, year after year, hoping for some word from on high -- if not an announcement then maybe at least a rumor, a hint, a sign. Now and then Kafka meets another faithful Digitool devotee; they commiserate, and compare notes. Are they fools to stand around waiting all this time? Are the Lords of Lisp up there in the turrets of the castle looking down at them and laughing? Or is the castle actually abandoned, vacant, falling to ruin? They fear the worst, but in the end they decide to wait a while more. After all these months and years, why lose hope now? 5.2 will be great when it gets here.

I gave up hope years ago.

March 21, 2007

Summer of Lisp 2007

Lisp NYC will be a Google Summer of Code mentoring organization again this year. A list of submitted proposals is available on their Summer of Lisp page (along with information on submitting your own idea—but hurry, the deadline is March 26).

Maybe we have to resign ourselves to the idea that every Summer of Lisp is going to include a non-portable sockets project, for now and forever.

March 20, 2007

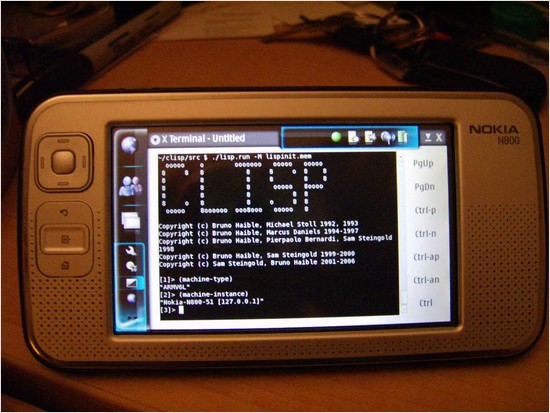

clisp on a Nokia N800

And that's not just an xterm to some other machine; check out the machine-type and machine-instance [From hefner on #lisp].

TwitterBuzz

Robert Synnott's TwitterBuzz, which shows the most popular URLs in twitter messages, is written in Common Lisp and powered by Hunchentoot.

(I found a hint that the site might be written in Lisp, and when I went to look at it I knew for sure. “Despite her creative brilliance, the average lisp hacker has yet to discover CSS.”)

March 19, 2007

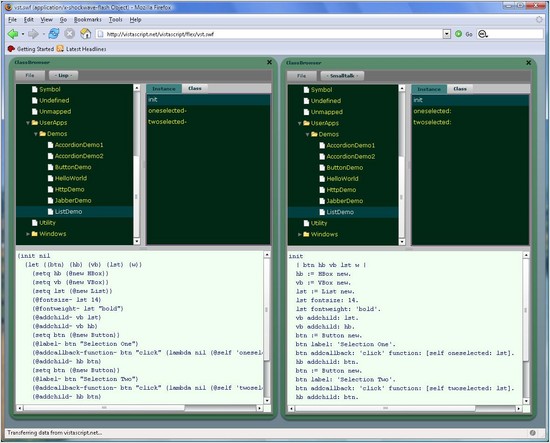

Vista Smalltalk: In Browser, Lisp Syntax

Vista Smalltalk for Flash is an implementation of Smalltalk written in ActionScript, which means it can run in your browser if you have a Flash plugin.

Source code is stored internally as s-expressions, and can be displayed with either Lisp or Smalltalk syntax.

March 16, 2007

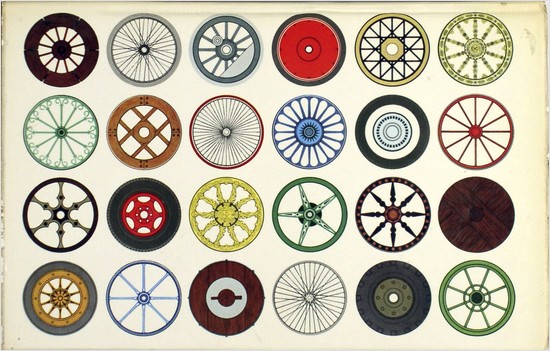

Cheap UAVs for Everybody

Chris Anderson wants to start a FIRST-style UAV competition, aiming for sub-$1K designs:

We're right on the verge of an era where it will be possible for regular people, not just engineers, to create home-built UAVs and guided rockets. So why not create a formal set of challenges so that innovative teams could advance the state of the art, just as the FIRST league has done for terrestrial robotics? (In fact, the Koreans already have such a competition...)

I love this idea, even if I don't think his Lego autopilot is very practical.

There are already some UAV competitions, but they all seem to be college-level, tens-of-thousands-of-dollar propositions.

March 09, 2007

Problems of Data Access Layers

Britt Ekland, who played John Cassavetes' wife in “Machine Gun McCain”, and was later a Bond girl.

A nice little summary of the issues involved in writing and using data access layers (DALs) on top of databases, by Will Hartung (I think I saw this on del.icio.us):

In Days Of Yore, with ISAM style databases, this was the typical paradigm for processing.

    use OrderCustomerIndex
    Find Order 'CUST001'
    Fetch Order
    while(order.custcode = 'CUST001')
        use OrderDetailOrderIndex
        Find OrderDetail order.orderno
        Fetch OrderDetail
        while(orderdetail.orderno = order.orderno)
            use ItemItemCodeIndex
            Find Item orderdetail.itemcode
            Fetch Item
            ...
            Fetch Next OrderDetail
        end while
        Fetch Next Order
    end while

So, if you had 10 orders with 5 line items each, this code would "hit" the DB library 100 times (10 for each order, once for the OrderDetail and once for Item data).

When DB calls are "cheap", this kind of thing isn't that expensive (since every DBMS (Relational and Object) on the planet essentially has an ISAM (B-Tree) core, they do this kind of thing all day long).

So, if you wrote your code with a DAL, you could easily come up with something like this:

    (defun process-orders (cust-code)
      (let ((orders (find-orders cust-code)))
        (dolist (order orders)
          (let ((details (find-order-details (order-no order))))
            (dolist (detail details)
              (let ((item (find-item (detail-item-code detail))))
                (do-stuff order detail item)))))))

But this is a trap, and this is where THE problem occurs with most any relational mapping process. Naively implemented with SQL, again for 10 orders with 5 line items each, you end up with SIXTY(!!!) SQL hits to the database. (1 query for the initial orders, 1 query for each order for its detail, 1 query for each detail for the item data). This is Bad. Round trips to the DB are SLLOOWWW, and this will KILL your performance.

Instead, you want to suck in the entire graph with a single query:

    SELECT orders.*, orderdetails.*, items.*
    FROM orders, orderdetails, items
    WHERE orders.custcode = ?
      AND orderdetails.orderno = orders.orderno
      AND items.itemcode = orderdetails.itemcode

Then, you use that to build your graph so you can do this:

    (defun process-orders (cust-code)
      (let ((orders (find-orders cust-code)))
        (dolist (order orders)
          (let ((details (order-details order)))
            (dolist (detail details)
              (let ((item (detail-item detail)))
                (do-stuff order detail item)))))))

And THAT's all well and good, but say you wanted to get the total of all canceled orders:

    (defun canceled-orders-total (cust-code)
      (let ((orders (find-orders cust-code))
            (total 0))
        (dolist (order orders)
          (when (equal (order-status order) "C")
            (setf total (+ total (order-total order)))))
        total))

This is fine, but you just sucked the bulk of ALL of the orders, including their detail and item information, to get at a simple header field.

Now, certainly this could have been done with a simple query as well:

    SELECT sum(total) FROM orders WHERE status = 'C' AND custcode = ?

The point is that balancing the granularity of what your DAL does, and how it does it, needs to be determined up front, at least in a rough edged way. This is what I mean by being aware that an RDBMS is going to be your eventual persistent store. If you don't assume that, you may very well end up with a lot of code like the first example that will immediately suck as soon as you upgrade to the SQL database.
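To make the round-trip arithmetic in Will's argument concrete, here is a minimal Python sketch using the standard `sqlite3` module. The schema, the `CountingDB` wrapper, and the `make_db`, `naive` and `joined` names are all made up for illustration; only the 10-orders-by-5-line-items shape comes from the quoted text. An in-memory SQLite database has no network latency, so the sketch counts queries rather than measuring time.

```python
import sqlite3


def make_db(n_orders=10, lines_per_order=5):
    """Build a throwaway in-memory database (hypothetical schema)."""
    db = sqlite3.connect(":memory:")
    db.executescript("""
        CREATE TABLE orders (orderno INTEGER PRIMARY KEY, custcode TEXT);
        CREATE TABLE orderdetails (orderno INTEGER, itemcode TEXT);
        CREATE TABLE items (itemcode TEXT PRIMARY KEY, name TEXT);
    """)
    for orderno in range(n_orders):
        db.execute("INSERT INTO orders VALUES (?, 'CUST001')", (orderno,))
        for line in range(lines_per_order):
            itemcode = f"ITEM{orderno}-{line}"
            db.execute("INSERT INTO items VALUES (?, 'widget')", (itemcode,))
            db.execute("INSERT INTO orderdetails VALUES (?, ?)", (orderno, itemcode))
    return db


class CountingDB:
    """Wraps a connection and counts how many queries are issued."""

    def __init__(self, db):
        self.db = db
        self.queries = 0

    def query(self, sql, params=()):
        self.queries += 1
        return self.db.execute(sql, params).fetchall()


def naive(cdb, custcode):
    """One query for the orders, one per order, one per detail line."""
    rows = []
    for (orderno,) in cdb.query(
            "SELECT orderno FROM orders WHERE custcode = ?", (custcode,)):
        for (itemcode,) in cdb.query(
                "SELECT itemcode FROM orderdetails WHERE orderno = ?", (orderno,)):
            name = cdb.query(
                "SELECT name FROM items WHERE itemcode = ?", (itemcode,))[0][0]
            rows.append((orderno, itemcode, name))
    return rows


def joined(cdb, custcode):
    """Fetch the whole graph in a single join."""
    return cdb.query(
        """SELECT o.orderno, d.itemcode, i.name
           FROM orders o
           JOIN orderdetails d ON d.orderno = o.orderno
           JOIN items i ON i.itemcode = d.itemcode
           WHERE o.custcode = ?""", (custcode,))


naive_db = CountingDB(make_db())
joined_db = CountingDB(make_db())
naive_rows = naive(naive_db, "CUST001")
joined_rows = joined(joined_db, "CUST001")
print(naive_db.queries, joined_db.queries)
```

With 10 orders of 5 line items each, the naive walk issues 1 + 10 + 50 = 61 queries (the "SIXTY" above) and the join issues one, for the same 50 rows.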

In my own days of yore (1997ish), I wrote a pretty complicated data access layer for a big Win32 application—in Visual Basic. The basic idea, which was Jim Firby's, was pretty neat, in that it made databases look almost like associative arrays.

Data was addressed with tuples, so ["books_by_isbn" "0140125051" "title"] would be the address (we called them “IGLs”, for Info Group Locator or something) of the "title" field of the record whose key field was “0140125051” in the "books_by_isbn" table. ["books_by_isbn" "0140125051" "*"] would be the collection of fields for book 0140125051, and ["books_by_isbn" "*" "*"] would be all books in the table.
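As a rough illustration of that addressing scheme, here is a small Python sketch. It is not the Datalink (which was Visual Basic on top of a real database); the nested-dict store, the `resolve` function, and the placeholder record contents are all assumptions made for the example.

```python
# Hypothetical stand-in for the database: table -> key -> record.
# The field values are placeholders, not real catalog data.
TABLES = {
    "books_by_isbn": {
        "0140125051": {"title": "Placeholder Title A", "pages": 100},
        "0000000000": {"title": "Placeholder Title B", "pages": 200},
    }
}


def resolve(igl, tables=TABLES):
    """Resolve a (table, key, field) address; "*" is a wildcard."""
    table, key, field = igl
    records = tables[table]
    if key == "*":
        # Every record in the table, each resolved for the requested field(s).
        return {k: resolve((table, k, field), tables) for k in records}
    record = records[key]
    if field == "*":
        # All fields of one record (copied so callers can't mutate the store).
        return dict(record)
    return record[field]


print(resolve(("books_by_isbn", "0140125051", "title")))
print(resolve(("books_by_isbn", "0140125051", "*")))
print(resolve(("books_by_isbn", "*", "*")))
```

The appeal is that one small address format covers a single field, a whole record, and a whole table.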

There was a virtual translation layer, so if you wanted you could have a single "books" table that contained both ISBNs and ASINs, and then define virtual "books_by_isbn" and "books_by_asin" tables that would let you use IGLs that indexed by ISBN or ASIN respectively. It seems a little insane now, but actually every column of every table went through the virtual translation map, which was just another table in the database. What can I say, we were Lispers with an AI background, indirection was in our blood.
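A toy version of that indirection, sketched in Python under heavy assumptions: the real map lived in a database table, while here it is two dicts, and the names `PHYSICAL`, `KEY_MAP`, `COLUMN_MAP` and `lookup` as well as the placeholder ASIN and title are invented for the example.

```python
# Hypothetical physical storage: one "books" table holding both identifiers.
PHYSICAL = {
    "books": [
        {"isbn": "0140125051", "asin": "ASIN-PLACEHOLDER", "title": "Placeholder Title"},
    ]
}

# Virtual table -> (physical table, physical key column).
KEY_MAP = {
    "books_by_isbn": ("books", "isbn"),
    "books_by_asin": ("books", "asin"),
}

# (virtual table, virtual column) -> (physical table, physical column).
# Every column access goes through this map, even when the names match.
COLUMN_MAP = {
    ("books_by_isbn", "title"): ("books", "title"),
    ("books_by_asin", "title"): ("books", "title"),
}


def lookup(igl):
    """Translate a virtual (table, key, column) address and fetch the value."""
    vtable, key, vcolumn = igl
    table, key_column = KEY_MAP[vtable]
    _, column = COLUMN_MAP[(vtable, vcolumn)]
    for row in PHYSICAL[table]:
        if row[key_column] == key:
            return row[column]
    raise KeyError(igl)


print(lookup(("books_by_isbn", "0140125051", "title")))
print(lookup(("books_by_asin", "ASIN-PLACEHOLDER", "title")))
```

Both addresses land on the same physical row, which is the whole trick, and also why every lookup pays for at least one extra hop.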

There was so much to the Datalink, as my DAL was called: 14,000 lines of shared caches, events, rules engines and schema migration support. But the result for users of the code was that complex database access patterns seemed simple and clean.

But of course we ran into the problem that some combinations of simple, clean Datalink operations would be fast and others would be slow, and unless you knew a lot of the details of the Datalink implementation you couldn't easily predict which way it would go. Which is exactly the sort of trap mentioned by Will.

I ended up writing a series of tech notes on how to structure common operations with the Datalink to keep things fast. It was effective, in that users of the code could then get good performance, but it didn't feel like the most satisfying solution. But the problem seems to be that nobody knows how to come up with a satisfying solution.

March 05, 2007

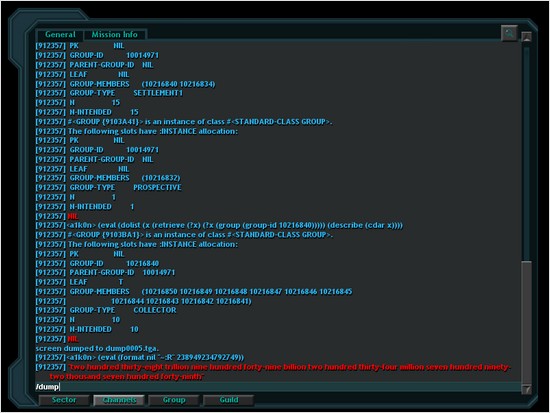

Vendetta Online

Vendetta Online, an MMORPG from Guild Software, has Lisp (SBCL) inside—for now [via LtU]. They're replacing the Lisp code with Erlang.

In the old days (12 years ago?) I think I used to chat about Lisp a bit with one of the Guild Software guys.

March 02, 2007

SMP, fork and ACL

Franz has a new article about “Simulating Symmetric Multi-Processing with fork()”, with a simple framework for distributing jobs across processes.

It'd be nice if it included a little context on the threading & SMP story.
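For readers who haven't seen the general technique, here is a minimal sketch of distributing jobs across forked processes. It is not Franz's framework and not Allegro CL code; it is Python on a POSIX system, and the `run_jobs` name, the round-robin split, and the pickle-over-pipe protocol are all my own assumptions.

```python
import os
import pickle


def run_jobs(func, jobs, workers=2):
    """Run func over a list of jobs in forked children; results keep job order."""
    children = []
    for w in range(workers):
        read_fd, write_fd = os.pipe()
        pid = os.fork()
        if pid == 0:
            # Child: handle every workers-th job, send results back, then exit.
            try:
                os.close(read_fd)
                chunk = [(i, func(job)) for i, job in enumerate(jobs)
                         if i % workers == w]
                with os.fdopen(write_fd, "wb") as out:
                    pickle.dump(chunk, out)
            finally:
                # Never fall through into the parent's code path.
                os._exit(0)
        # Parent: keep only the read end of this child's pipe.
        os.close(write_fd)
        children.append((pid, read_fd))

    results = [None] * len(jobs)
    for pid, read_fd in children:
        with os.fdopen(read_fd, "rb") as inp:
            # If a child died before writing, this raises EOFError.
            for i, value in pickle.load(inp):
                results[i] = value
        os.waitpid(pid, 0)
    return results


print(run_jobs(lambda x: x * x, [1, 2, 3, 4, 5], workers=2))
```

Each child gets a copy-on-write snapshot of the parent's memory, so `func` and `jobs` need no serialization on the way in; only the results cross a pipe on the way out.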