January 30, 2007

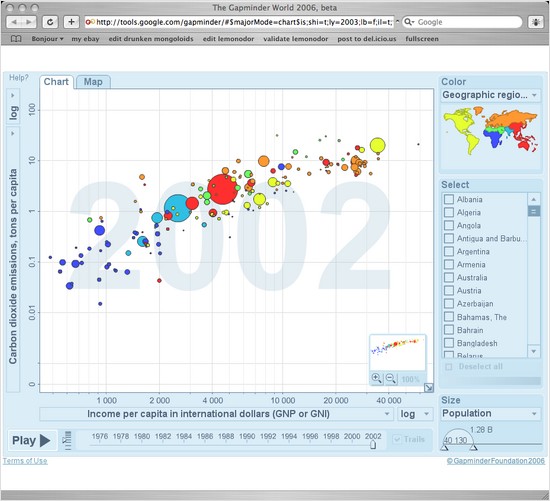

Gapminder

I don't remember where I first saw this wonderful Hans Rosling video from TED. By five minutes in I was totally hooked on his energetic, hands-on data visualization and kind of blown away by what he was saying about the developing world.

Now Google has released a Flash version of the program used to create those visualizations [via Dean Armstrong].

The mission of Gapminder, a non-profit founded by Hans Rosling, is to create software to help visualize human development.

January 28, 2007

Eggs

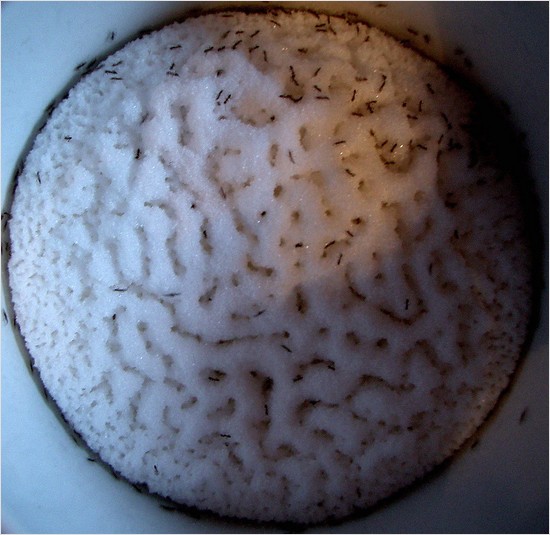

A few days ago I was at the supermarket looking at eggs. I realized I had no idea what Grade A meant, or what the meaning of any other grade was, or even what other grades there were. Maybe this is why I feel like I'm faking it when I buy eggs.

That happens sometimes, where I'm suddenly shocked to realize I lack even basic knowledge about some mundane aspect of life. Are there any differences between brown and white eggs, other than color? Is Henry Kissinger really a war criminal? Do drum brakes even have brake pads? Usually I pledge to myself that when I get home I will look up whatever it is on Wikipedia. Three minutes later I've forgotten about it forever.

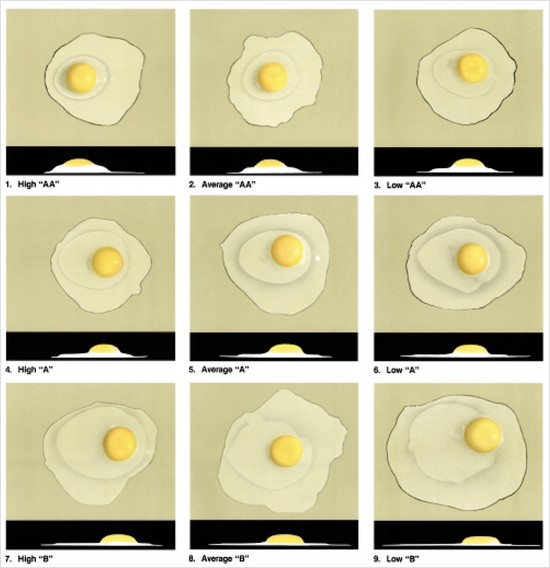

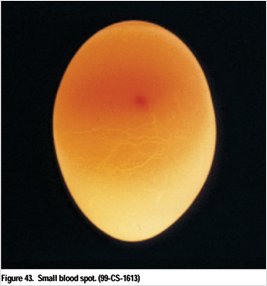

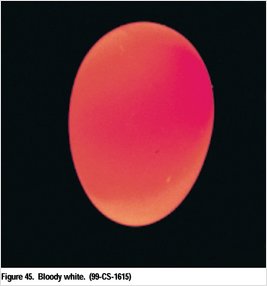

I'm glad I didn't forget about the egg thing, though, because the USDA Egg-Grading Manual is pretty cool. It describes a world of blood rings, blood spots, meat spots, cross-contamination, leakers and black rots. It has enlarged drawings of hen reproductive systems, photos of abnormally shaped eggs, an official air cell gauge, and a list of obsolete grades (anyone want a dozen “U.S. Dirties”?). Don't even get me started on the discussion of hand candling technique.

I think I've been a fan of catalogs of pathologies since I was a kid, reading through my dad's dermatology magazines. This USDA manual has almost the same style of photography and subject presentation that those journals had in the mid 70s. I like to imagine similar photographic grading guides for supermodels and web 2.0 sites.

OK then, so what is the scoop on brown eggs?

Shell color does not affect the quality of the egg and is not a factor in the U.S. standards and grades. Eggs are usually sorted for color and sold as either “whites” or “browns.” Eggs sell better when sorted and packed according to color than when sold as “mixed colors.”

For many years, consumers in some areas of the country have preferred white eggs, believing, perhaps, that the quality is better than that of brown eggs. In other areas, consumers have preferred brown eggs, believing they have greater food value. These opinions do not have any basis in fact, but it is recognized that brown eggs are more difficult to classify as to interior quality than are white eggs. It is also more difficult to detect small blood and/or meat spots in brown eggs. Research reports and random sample laying tests show that the incidence of meat spots is significantly higher in brown eggs than in white eggs.

Meat spot fans probably already knew that. And they probably know the significance of the color of a chicken's ear lobes.

January 26, 2007

Founders at Work

Founders at Work: Stories of Startups' Early Days, by Y Combinator founding partner Jessica Livingston, is finally out [via gavin].

It looks like the complete list of interviews is available at the book's website, along with the text of the Steve Wozniak interview.

Livingston: What is the key to excellence for an engineer?

Wozniak: You have to be very diligent. You have to check every little detail. You have to be so careful that you haven't left something out. You have to think harder and deeper than you normally would. It's hard with today's large, huge programs.

I was partly hardware and partly software, but, I'll tell you, I wrote an awful lot of software by hand (I still have the copies that are handwritten) and all of that went into the Apple II. Every byte that went into the Apple II, it had so many different mathematical routines, graphics routines, computer languages, emulators of other machines, ways to slip your code in and out of an emulation mode. It had all these kinds of things and not one bug ever found. Not one bug in the hardware, not one bug in the software. And you just can't find a product like that nowadays. But, you see, I had it so intense in my head, and the reason for that was largely because it was part of me. Everything in there had to be so important to me. This computer was me. And everything had to be as perfect as could be made. And I had a lot going against me because I didn't have a computer to compile my code, my software.

New Job at Energid

I have a new job. For about the past nine months I've been doing contract work for Energid, but starting this week I'm an official full-time employee there.

My initial focus will be on developing natural language dialog systems, but they've got a variety of projects going on. I'm also excited to have the chance to help chart a course in terms of new projects.

Robot Armies

This is pretty much where I grew up.

The February issue of Harper's Magazine has an article on unmanned military vehicles, “The Coming Robot Army” (not yet available online):

At $145 billion, the Army's Future Combat Systems (FCS) is the costliest weapons program in history, and in some ways the most visionary as well.

[...]

FCS calls for seven new unmanned systems. It's not clear how much autonomy each system will be allowed. According to Unmanned Effects (UFX): Taking the Human Out of the Loop, a 2003 study commissioned by the U.S. Joint Forces Command, advances in artificial intelligence and automatic target recognition will give robots the ability to hunt down and kill the enemy with limited human supervision by 2015. As the study's title suggests, humans are the weakest link in the robot's “kill chain”—the sequence of events that occurs from the moment an enemy target is detected to its destruction.

[...]

Through the weeds I spotted the SWORDS robot squatting in the dust. My heart skipped a beat. The machine gun was pointed straight at me. I'd watched Sgt. Mero deactivate SWORDS. I saw him disconnect the cables. And the machine gun's feed tray was empty. There wasn't the slightest chance of a misfire. My fear was irrational, but I still made a wide circle around the robot when it was time to leave.

I wish I could get hold of a copy of the Boeing promotional video the article mentions, starring Richard Dean Anderson as a UAV- and UGV-wielding commander trying to get his troops across a bridge.

The article also mentions the shortage of satellite bandwidth for UAVs as potential pressure for greater vehicle autonomy. GlobalSecurity.org claims that a single Global Hawk can require 500 Mbps, which is five times the total bandwidth available to the entire U.S. military during the first Gulf War. If a vehicle can analyze its high resolution multi-spectral imagery onboard, decide what to do and then do it with less controller intervention, you reduce the necessary bandwidth and make it possible to operate more vehicles simultaneously.

“Raptors, Robots, and Rods from God: The Nightmare Weaponry of Our Future”, from Z Magazine, has a bit on the economics behind FCS.

January 19, 2007

USGS Earthquake Data from Lisp

Kevin Layer has a fun little article in Franz' Tech Corner about processing U.S. Geological Survey earthquake data with ACL.

    cl-user(8): (get-quake-info :period :week :larger-than nil :within 1.0
                                :reference-location (place-to-location "555 12th St, Oakland, CA, 94607, US"))
    ;; Downloading data...done.
    January 09, 2007 22:33:42 GMT: Hollister, California: magnitude=3.1
    January 09, 2007 22:16:21 GMT: Los Altos, California: magnitude=2.0
    January 09, 2007 21:39:52 GMT: Hollister, California: magnitude=1.3
    January 09, 2007 16:56:54 GMT: Aromas, California: magnitude=1.4
    January 09, 2007 13:20:02 GMT: Cobb, California: magnitude=1.4
    January 09, 2007 11:52:11 GMT: Hayward, California: magnitude=1.2
    January 09, 2007 03:01:03 GMT: El Cerrito, California: magnitude=1.9
    January 08, 2007 11:30:55 GMT: San Jose, California: magnitude=2.0
    January 07, 2007 14:22:56 GMT: Walnut Creek, California: magnitude=1.2
    January 07, 2007 09:20:38 GMT: Kenwood, California: magnitude=3.1
    January 06, 2007 12:58:01 GMT: Yountville, California: magnitude=1.8
    January 06, 2007 07:04:01 GMT: Oakland, California: magnitude=2.4
    January 05, 2007 22:55:53 GMT: Walnut Creek, California: magnitude=1.3
    January 05, 2007 20:18:51 GMT: Livermore, California: magnitude=2.4
    January 05, 2007 17:54:17 GMT: Ladera, California: magnitude=1.1
    January 05, 2007 11:09:43 GMT: Cobb, California: magnitude=2.4
    January 05, 2007 10:40:26 GMT: Concord, California: magnitude=1.3
    January 04, 2007 20:38:03 GMT: Los Altos, California: magnitude=1.7
    January 04, 2007 20:21:41 GMT: Aromas, California: magnitude=2.6
    January 04, 2007 13:42:36 GMT: Palo Alto, California: magnitude=1.2
    January 04, 2007 03:42:10 GMT: Oakland, California: magnitude=1.9
    nil

Unfortunately, ACL 8.0 Express' heap limit prevented the code from compiling for me. It does some compile-time processing of ZIP code data, and while Kevin's comments mention that the code uses 1/10 as much memory as his first version, it's still too much for the free version of ACL.

So for your convenience, this patch modifies the code to do the ZIP code processing at load-time: usgs-acl-express.patch
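For anyone wondering what the compile-time versus load-time distinction looks like, here is a minimal sketch. It is not Kevin's code or my patch: `*raw-zip-lines*`, `parse-zip-line` and both table variables are hypothetical names, and the two data lines are illustrative values standing in for the real ZIP code file. The macro version does its parsing during macroexpansion, so the compiler has to hold the finished table; the plain version defers the same work until the file is loaded.

```lisp
;; Hypothetical stand-in for the ZIP code data (illustrative values only).
(eval-when (:compile-toplevel :load-toplevel :execute)
  (defparameter *raw-zip-lines*
    '("94607 37.8071 -122.2851"
      "90012 34.0614 -118.2385"))

  (defun parse-zip-line (line)
    "Parse a \"ZIP LAT LON\" string into a list (zip lat lon)."
    (let* ((end1 (position #\Space line))
           (end2 (position #\Space line :start (1+ end1))))
      (list (subseq line 0 end1)
            (read-from-string line t nil :start (1+ end1) :end end2)
            (read-from-string line t nil :start (1+ end2))))))

;; Compile-time flavor: the parsing runs during macroexpansion and the
;; resulting table is embedded in the compiled file as a quoted literal.
(defmacro zip-table-at-compile-time ()
  `',(mapcar #'parse-zip-line *raw-zip-lines*))

(defparameter *zip-table-compiled* (zip-table-at-compile-time))

;; Load-time flavor: the compiler only sees a function call; the table is
;; built when the file is loaded.
(defparameter *zip-table-loaded*
  (mapcar #'parse-zip-line *raw-zip-lines*))
```

Both variables end up holding the same table; the difference is only in when the memory gets spent, which is what matters under a heap limit.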

Once I got it working, I wanted to modify the output a bit to look more like the USGS output, which gives the offset direction and distance of the epicenter from the nearest city:

    MAG  DATE        LOCAL-TIME  LAT      LON       DEPTH  LOCATION
         y/m/d       h:m:s       deg      deg       km
    1.5  2007/01/19  16:54:03    34.187N  117.496W   6.7   9 km ( 6 mi) WSW of Devore, CA
    1.7  2007/01/18  13:37:46    33.823N  117.517W   0.0   7 km ( 4 mi) SE of Corona, CA
    1.9  2007/01/17  16:08:31    34.320N  118.537W   9.5   6 km ( 4 mi) NNW of Granada Hills, CA
    1.3  2007/01/17  15:42:23    33.978N  117.160W  17.5   9 km ( 5 mi) NE of Moreno Valley, CA
    1.5  2007/01/17  14:48:04    34.053N  117.260W  17.5   1 km ( 1 mi) WNW of Loma Linda, CA

Kevin's code already figures out the city closest to the quake, so I just pass that to his place-to-location function to get the latitude and longitude of the city from Google. There was one glitch: the ZIP code data he uses to figure out the nearest city includes some entries that are not actually ZIP codes and don't correspond to any city, so I modified the code to not return those. I don't know if Google's city-level geocoding returns the coordinates of the center of mass or what, but testing with Google Maps gives some evidence that it returns some basically central point. From that I use distance-between to get the distance in miles.
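I haven't reproduced distance-between here, and I'm not claiming this is how it works internally, but a great-circle distance using the haversine formula is one common way to get miles from two latitude/longitude pairs. In this sketch `haversine-miles`, `radians` and `*earth-radius-miles*` are my own names, and the function takes plain decimal degrees rather than Kevin's location objects.

```lisp
(defparameter *earth-radius-miles* 3958.8d0
  "Mean Earth radius in miles.")

(defun radians (degrees)
  "Convert DEGREES to radians."
  (* degrees (/ pi 180)))

(defun haversine-miles (lat1 lon1 lat2 lon2)
  "Great-circle distance in miles between two points in decimal degrees.
North latitudes and east longitudes are positive."
  (let* ((phi1 (radians lat1))
         (phi2 (radians lat2))
         (dphi (- phi2 phi1))
         (dlambda (radians (- lon2 lon1)))
         (a (+ (expt (sin (/ dphi 2)) 2)
               (* (cos phi1) (cos phi2)
                  (expt (sin (/ dlambda 2)) 2)))))
    ;; Clamp against rounding so ASIN never sees a value above 1.
    (* 2 *earth-radius-miles* (asin (min 1d0 (sqrt a))))))

;; One degree of latitude along a meridian comes out at about 69.1 miles.
(haversine-miles 0 0 1 0)
```

At the scale of a few miles between a city center and an epicenter, the choice of Earth radius matters far less than where the geocoder decides the "center" of the city is.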

To get the direction of the epicenter from the reference city, I first calculate the bearing from the city to the quake using a formula from Ed Williams' Aviation Formulary:

    (defun bearing-to (location1 location2)
      (flet ((radians (deg) (* deg (/ pi 180.0)))
             (degrees (rad) (* rad (/ 180.0 pi))))
        (let ((lat1 (radians (location-latitude location1)))
              (lon1 (radians (location-longitude location1)))
              (lat2 (radians (location-latitude location2)))
              (lon2 (radians (location-longitude location2))))
          (degrees (mod (atan (* (sin (- lon2 lon1)) (cos lat2))
                              (- (* (cos lat1) (sin lat2))
                                 (* (sin lat1) (cos lat2) (cos (- lon2 lon1)))))
                        (* 2 pi))))))

Then I find the nearest compass direction to that bearing:

    (defparameter *directions*
      '(("N" . 0)
        ("NE" . 45)
        ("E" . 90)
        ("SE" . 135)
        ("S" . 180)
        ("SW" . 225)
        ("W" . 270)
        ("NW" . 315)))

    (defun bearing-to-direction (bearing)
      (let ((best nil)
            (best-diff 0))
        (dolist (dir *directions*)
          (let* ((direction (car dir))
                 (heading (cdr dir))
                 (raw (abs (- bearing heading)))
                 ;; Measure around the circle, so a bearing of 350
                 ;; is 10 degrees from N rather than 350.
                 (diff (min raw (- 360 raw))))
            (when (or (null best)
                      (< diff best-diff))
              (setf best direction)
              (setf best-diff diff))))
        best))
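The USGS listing uses finer-grained points like WSW, NNW and WNW. A minimal sketch of a 16-point version, which swaps the search over a table for plain arithmetic: divide the circle into 22.5 degree sectors and round to the nearest one. The names `*compass-points*` and `bearing-to-compass-point` are mine and aren't part of either patch.

```lisp
(defparameter *compass-points*
  #("N" "NNE" "NE" "ENE" "E" "ESE" "SE" "SSE"
    "S" "SSW" "SW" "WSW" "W" "WNW" "NW" "NNW")
  "The 16 compass points, clockwise from north, 22.5 degrees apart.")

(defun bearing-to-compass-point (bearing)
  "Return the nearest of the 16 compass points for BEARING in degrees."
  ;; ROUND with a divisor gives the index of the nearest sector; the final
  ;; MOD folds sector 16 (bearings just short of 360) back onto north.
  (let ((sector (round (mod bearing 360) 22.5)))
    (aref *compass-points* (mod sector 16))))

(bearing-to-compass-point 292.5) ; => "WNW"
(bearing-to-compass-point 350)   ; => "N"
```

Because the rounding happens on the circle itself, bearings just below 360 land on north without any special case.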

The final result:

    CL-USER> (get-quake-info :period :week :larger-than nil :within 1.0
                             :reference-location (place-to-location "los angeles, ca, us"))
    ;; Downloading data...done.
    January 18, 2007 21:37:46 GMT: 4.6 miles SE of Corona, California: magnitude=1.7
    January 18, 2007 00:25:25 GMT: 3.5 miles NW of Boron, California: magnitude=1.5
    January 18, 2007 00:08:31 GMT: 3.2 miles N of Porter Ranch, California: magnitude=1.9
    January 17, 2007 22:48:04 GMT: 0.3 miles N of Loma Linda, California: magnitude=1.5
    January 17, 2007 17:36:43 GMT: 5.9 miles E of Gorman, California: magnitude=1.3
    January 17, 2007 00:38:13 GMT: 3.0 miles NW of Boron, California: magnitude=2.2
    January 16, 2007 19:57:58 GMT: 6.7 miles N of Santa Paula, California: magnitude=2.1
    January 16, 2007 00:47:12 GMT: 3.1 miles NW of Boron, California: magnitude=1.7
    January 15, 2007 01:08:47 GMT: 3.2 miles NW of Boron, California: magnitude=1.7
    January 14, 2007 19:49:13 GMT: 12.4 miles E of Anaheim, California: magnitude=1.4
    January 14, 2007 14:50:31 GMT: 17.1 miles NW of Frazier Park, California: magnitude=1.4
    January 14, 2007 09:06:07 GMT: 6.2 miles N of Crestline, California: magnitude=1.7
    January 13, 2007 21:01:04 GMT: 6.8 miles SW of Huntington Beach, California: magnitude=2.1
    January 13, 2007 20:57:40 GMT: 1.3 miles NW of Newport Beach, California: magnitude=1.9
    January 13, 2007 18:43:57 GMT: 6.8 miles N of Piru, California: magnitude=1.7
    January 13, 2007 16:31:55 GMT: 4.8 miles E of Yorba Linda, California: magnitude=1.6
    January 13, 2007 06:39:57 GMT: 1.0 miles NW of Loma Linda, California: magnitude=2.1
    NIL

It looks basically right, but when you compare to even just the first few USGS results there are some differences.

- Devore, CA is about 60 miles east of LA, which probably puts it outside the 1.0 degrees bound, longitude-wise.

- The magnitude 1.7 quake near Corona seems to match up.

- My code has a 1.5 quake near Boron that doesn't seem to match anything on the USGS website.

- The USGS has the magnitude 1.9 quake on 1/18/2007 4 miles north of Granada Hills while my code has it as 3.2 miles north of Porter Ranch; luckily Granada Hills and Porter Ranch seem to be right next to one another.

- The 1.5 quake near Loma Linda matches, though the USGS calls it 1 mile WNW while I have it as 0.3 miles north.

I am satisfied by this level of consistency.

This patch adds the additional code and includes the ACL 8.0 Express patch: usgs-enhanced.patch.

January 17, 2007

LaneHawk Rollout With Shoppers

LaneHawk has a real, official, production rollout. It will be going into six Shoppers Food & Pharmacy stores, probably out east.

“BOB [bottom of basket] shrink is a problem grocery retailers have tried to combat for years, with pretty limited results,” said Kurt Schertle, Senior Vice President of Merchandising for Shoppers. “We have been testing LaneHawk extensively, and are very impressed by the results. LaneHawk is the first product we have found that actually works in reducing BOB loss.”

Six stores isn't a huge deployment, but I think it's neat that a descendant of something I first prototyped almost three years ago is now in the wild.

Speaking of Idealab, I hadn't seen this 2005 profile of Bill Gross from Fortune: “Idealab Reloaded: Surprise! Ex-dot-com-wizard Bill Gross is back.” “The entire $8 billion to $10 billion market we call search today is more or less all based on Bill’s idea for GoTo.com.” (That's the GoTo.com that bought a Lisp company for $250 million.)

January 13, 2007

Lisp Job Surge

Lispjobs has listed 7 positions at 5 different companies in the last 3 days [via est].

January 11, 2007

BLDGBLOG at CLUI

The Center for Land Use Interpretation is hosting a BLDGBLOG event this Saturday. Matt Coolidge (CLUI), Mary-Ann Ray, Robert Sumrell, Christine Wertheim (IFF), and Margaret Wertheim (IFF) will be speaking.

January 10, 2007

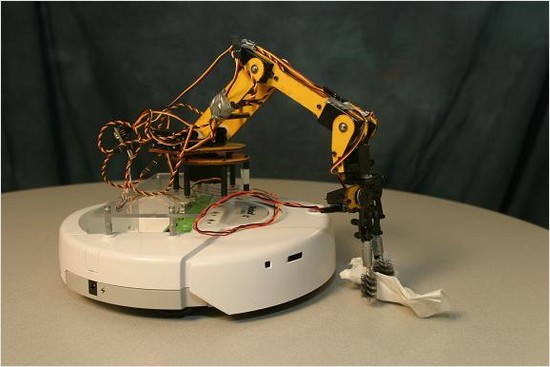

iRobot Create Released

iRobot has released a version of the Roomba platform aimed at hobbyists, developers and researchers [via Ars Technica]. The iRobot “Create” (what an awkward name) seems to be a Roomba without the vacuuming bits, and with a payload bay and expansion port. If you don't want to use the serial port or hack your own hardware onto the expansion port, you can buy the iRobot Command Module, with an 8-bit, 20 MHz ATmega168 microcontroller for straightforward onboard control.

There's a Create project gallery but at this point it's not much more than tiny photos of modded Creates. Some of the examples make me wonder just how much payload these things can take and still move—this may be our best shot at Roomba Cinema.

The base model is $129. If you're interested in doing some swarming with robust, proven hardware, the 10-pack for $999 seems like a great deal.

I'm also very curious about the Robotics Primer Workbook (companion to The Robotics Primer, by Maja J Matarić and soon? to be published by MIT Press). It looks like it's going to be an open source guide to some advanced robot programming topics, focusing on the Create with the iRobot Command Module or a gumstix-based controller (and some mention of the Microsoft Robotics Studio).

99 Problems But malloc Ain't One

Joao Meidanis has a list of “Ninety-nine Lisp Problems” that might be a nice way to learn some Lisp ways of thinking.

- P10 (*) Run-length encoding of a list.

- Use the result of problem P09 to implement the so-called run-length encoding data compression method. Consecutive duplicates of elements are encoded as lists (N E) where N is the number of duplicates of the element E.

Example:

* (encode '(a a a a b c c a a d e e e e))

((4 A) (1 B) (2 C) (2 A) (1 D) (4 E))

[...]

- P34 (**) Calculate Euler's totient function phi(m).

- Euler's so-called totient function phi(m) is defined as the number of positive integers r (1 <= r < m) that are coprime to m.

Example: m = 10: r = 1,3,7,9; thus phi(m) = 4. Note the special case: phi(1) = 1.

* (totient-phi 10)

4

Find out what the value of phi(m) is if m is a prime number. Euler's totient function plays an important role in one of the most widely used public key cryptography methods (RSA). In this exercise you should use the most primitive method to calculate this function (there are smarter ways that we shall discuss later).
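To give a flavor of what solutions might look like, here is a minimal sketch of both quoted problems. These are my own attempts, not the solutions from the list: `encode` builds the runs directly instead of going through P09 as the problem suggests, and `totient-phi` is the "most primitive method" the problem asks for, counting coprimes with `gcd`.

```lisp
(defun encode (list)
  "Run-length encode LIST into (N E) pairs, comparing elements with EQL."
  (let ((runs '()))
    (dolist (item list (nreverse runs))
      (if (and runs (eql item (second (first runs))))
          ;; Same element as the current run: bump its count.
          (incf (first (first runs)))
          ;; Otherwise start a new run of length 1.
          (push (list 1 item) runs)))))

(defun totient-phi (m)
  "Count the integers r with 1 <= r < m that are coprime to M.
phi(1) is 1 by the special case in the problem statement."
  (if (= m 1)
      1
      (loop for r from 1 below m
            count (= 1 (gcd r m)))))

(encode '(a a a a b c c a a d e e e e))
;; => ((4 A) (1 B) (2 C) (2 A) (1 D) (4 E))
(totient-phi 10) ; => 4
(totient-phi 7)  ; => 6
```

The last call hints at the answer to the question in P34: for a prime m, every r below it is coprime to it, so phi(m) is m - 1.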

The list is based on Werner Hett's Ninety-Nine Prolog Problems (link is to the Wayback Machine version; the original seems to be dead), and so has that Prologian emphasis on logic (and I can't help but think of Joyce's quote, “If Programming 101 classes started with social software rather than math problems and competitive games, more women might discover an unexpected interest”). Joao's Lisp translation is incomplete, with solutions provided for only the first few problems and quite a bit of Prolog phrasing remaining.

I wonder what “Ninety-Nine Unix Problems” would look like. Or “Ninety-Nine Regex Problems” (hold me, I'm scared).

I feel like I'm taking a big risk with the Jay-Z reference, but I'm living dangerously these days.

January 09, 2007

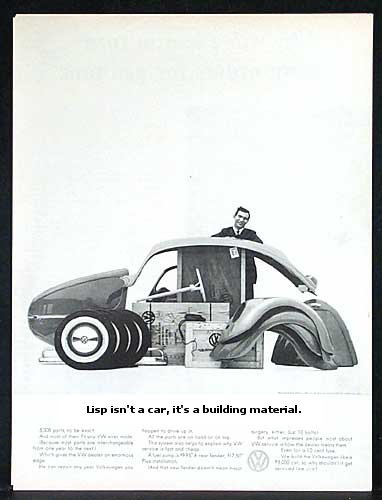

The Volkswagen Lisp

Damien Katz has a cute, sweet post about the Volkswagen Lisp, “the most configurable and powerful car in automotive history.” [via anarchaia.]

January 05, 2007

Hog Zero One, Gunslinger Has You Loud and Clear

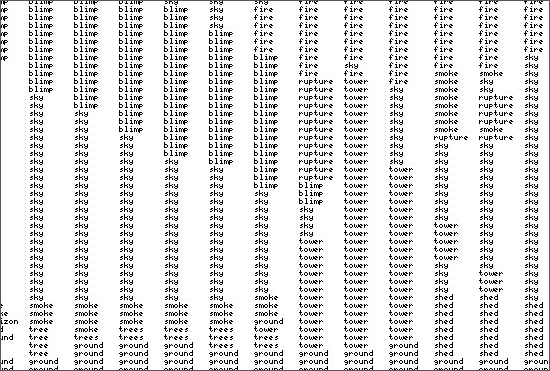

The Training & Simulation Journal has a short article about a project I've been working on lately: “PC-based JTAC sim will train Air Force airstrike controllers”.

In many ways, the JTAC [joint terminal attack controller] sim will resemble a first-person-shooter video game. The user will see a simulated battlefield. He’ll have an array of virtual tools, such as binoculars, a laser designator and Global Positioning System gear. It’s how the JTAC will direct aircraft that makes this sim different. The interface is almost totally voice-driven. The user directs the computer-controlled pilots using the same commands given to real pilots. For extra authenticity and convenience, users can plug the headsets from their real-life communications gear into their laptops.

The virtual pilot first checks in with the JTAC, who describes the target to the pilot. The computer pilot reads back the information, and then the JTAC uses commands to direct the simulated pilot’s eyes to the target. This requires a voice-recognition system clear enough to recognize the commands and artificial intelligence (AI) sophisticated enough to direct the virtual pilot in response to those commands.

I've primarily been working on the natural language dialog aspect of the system, the most challenging part of which might be where “the JTAC uses commands to direct the simulated pilot’s eyes to the target.”

The problem is that the pilot is flying high and fast and can see a huge amount of territory all at once, and the JTAC needs to be able to direct the pilot's vision to a very particular location. He typically does that by referencing landmarks within a visual context that is at least partially shared between the two, i.e. they're both looking at basically the same region but from very different perspectives, so the JTAC needs to choose landmarks that will be obvious from the air. And then there are many strategies for making use of those landmarks to guide the pilot's vision—e.g., defining a unit of measure based on the extent of some geographic feature and doing a kind of dead reckoning from an anchor point (“Go two units of measure to the west of the radio tower and then one north.”) or an iterative refinement (“In the northwest corner of the city there's a river, and there's a bridge going over the river, and there's a tank on the west side of the bridge.”).
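As a toy illustration of the unit-of-measure strategy only, and not anything from the actual system, here is a sketch that resolves an utterance like the radio tower example once it has already been parsed. Everything in it is an assumption: the function name `offset-from-anchor`, flat (east north) coordinates in meters, and a step list of (count direction) pairs.

```lisp
(defun offset-from-anchor (anchor unit-length steps)
  "Dead-reckon from ANCHOR, a list (east north) in meters.
UNIT-LENGTH is the agreed unit of measure in meters. STEPS is a list of
(count direction) pairs, direction being :north, :south, :east or :west.
Returns the resulting (east north) position."
  (let ((east (first anchor))
        (north (second anchor)))
    (dolist (step steps (list east north))
      (destructuring-bind (count direction) step
        (let ((distance (* count unit-length)))
          (ecase direction
            (:north (incf north distance))
            (:south (decf north distance))
            (:east (incf east distance))
            (:west (decf east distance))))))))

;; "Go two units of measure to the west of the radio tower and then one north."
;; Hypothetical tower at (1000 2000) with a 300 meter unit of measure.
(offset-from-anchor '(1000 2000) 300 '((2 :west) (1 :north)))
;; => (400 2300)
```

The arithmetic is the easy part; the hard parts are agreeing on which feature defines the unit and which radio tower is meant.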

It's an interesting challenge.