August 29, 2007

JackBot for Sale

Another piece of DARPA Grand Challenge history is for sale. MonsterMoto's JackBot, which was able to venture 7 miles into the desert in the 2005 Grand Challenge, is looking for a new home:

Everything in the foreground of the attached photo is for sale, lease or hire--or maybe trade for an opportunity to earn an advanced degree.

Description:

1) Unit on the right: "JackBot" DGC 2005 Finalist. Lots of goodies, but would prefer not to part out.

2) Unit on the left: Me. Unfortunately, cannot be parted out. Must be fed daily. Will Travel.

3) Not visible: Lab, test site, spares, prototypes, computers, etc, etc.

Excluded by rule from the 2007 Urban Challenge. So, since the DGC2005, we've been focussing on multi-human, multi-UXV teaming and coordination, system enhancements, software cleanup, etc. Website is out of date, but here: www.monstermoto.com/jackbot

Seeking an opportunity to continue our work (about 16,000 hrs so far!) within a quality, funded setting. In addition to business opportunities, an academic or research setting would be welcome.

Thank you in advance for any ideas or pointers,

MonsterMoto

monstermoto@yahoo.com

August 28, 2007

Amateur UAV Passion and Disappointment

Pict'Earth (man, I don't like that name) is doing photo stitching and georeferencing of photos from their cheap UAV into Google Earth.

I think they imply (see the comment from David Riallant) that they can do this in real time during the flight, but that for this video they processed the images offline to remove some of the low-quality ones. They have a KML file for Google Earth, but either it doesn't really contain much or I just couldn't figure it out.
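For anyone curious how a single georeferenced photo ends up in Google Earth, here's a minimal sketch (not Pict'Earth's pipeline, which they don't describe) that wraps one image in a KML GroundOverlay. It assumes you already know the photo's bounding box in latitude and longitude; working that out from the plane's GPS position, altitude, and attitude is the hard part and isn't shown. The function name `ground_overlay_kml` and the example file name are hypothetical.

```python
from xml.sax.saxutils import escape

KML_NS = "http://www.opengis.net/kml/2.2"


def ground_overlay_kml(name: str, image_href: str,
                       north: float, south: float, east: float, west: float,
                       rotation: float = 0.0) -> str:
    """Build a KML document that drapes one image over a lat/lon bounding box.

    north/south are latitudes and east/west are longitudes, in decimal degrees.
    rotation is the LatLonBox rotation in degrees (counterclockwise).
    """
    # The XML declaration must be the very first thing in the document.
    return f"""<?xml version="1.0" encoding="UTF-8"?>
<kml xmlns="{KML_NS}">
  <GroundOverlay>
    <name>{escape(name)}</name>
    <Icon><href>{escape(image_href)}</href></Icon>
    <LatLonBox>
      <north>{north}</north>
      <south>{south}</south>
      <east>{east}</east>
      <west>{west}</west>
      <rotation>{rotation}</rotation>
    </LatLonBox>
  </GroundOverlay>
</kml>
"""


# Hypothetical example: one frame covering a small box of terrain.
print(ground_overlay_kml("frame 12", "frame12.jpg", 45.101, 45.099, 5.702, 5.698))
```

Stitching a whole flight would just mean emitting one GroundOverlay per kept frame inside a shared Document or Folder.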

Pict'Earth's Todd Sipala describes why he finds first person video on RC planes compelling:

You put on the goggles, take a deep breath, and accelerate down the runway, springing into the air on a dead-calm Sunday morning. Everything seems to work, no video noise... check, uplink responds nicely... check, DV camcorder is recording... check. Climb up to 100m or so, find some bearings, and set out for a speck in the distance... 3km is the target for today. Google Earth satellite images have been memorized, here goes....

Chris Anderson recently organized an amateur UAV fly-in at the Alameda Naval Air Station. The fly-in was for a PBS documentary: “Since this was done mostly for the cameras (which can't tell the difference between autonomous and RC flight), we didn't really push the UAV envelope very far.” I'm a little surprised to find myself disappointed by the attitude that, since the cameras can't tell whether an RC plane is autonomous, it's OK to shoot video of planes being remotely piloted and present it as though they were autonomous.

Less surprising is my disappointment with Chris' attitude toward the regulatory issues with hobbyist UAVs as evidenced in the RC Groups thread. I know he realizes that the FAA may not be happy with what he's doing, and I thought he was working for change on that front, but some of his answers in the thread are a bit evasive and obtuse.

I'm most disappointed by the conflict Chris felt when Amir, a 17-year-old Iranian kid (who commented in Chris' blog), showed off his UAV (also on Ask Slashdot). After all that disappointment, I was glad to see many of the commenters on Chris' blog and several rcgroups.com members being supportive of Amir.

August 21, 2007

BYU MAGICC Video

I love this video from Brigham Young University's MAGICC lab [via RC Groups].

They show UAVs doing laser range finder-based obstacle avoidance, optical flow-based canyon following, formation flying, cooperative timing including simultaneous arrival (and a successful recovery from a midair collision). There are even some bloopers.

The MAGICC site has lots more videos, though I couldn't find a high resolution version of the YouTubed highlight reel.

August 19, 2007

Csikszentmihályi Again: Engineering Politics

Chris Csikszentmihályi warns about killer robots in yet another article, this time in Good magazine: “Engineering Politics: What killer robots say about the state of social activism”:

Among the many changes in U.S. policy after 9/11 was one that went unnoticed by everyone except a few geeks: The military quietly reversed its longstanding position on the role of robots in battlefields, and now embraces the idea of autonomous killing machines. There was no outcry from the academics who study robotics—indeed, with few exceptions they lined up to help, developing new technologies for intelligent navigation, locomotion, and coordination. At my own institute, an enormous space is being out-fitted to coordinate robotic flying, swimming, and marching units in preparation for some future Normandy.

Yes, I'm fascinated with the speed with which the military robot has assumed a significant role in actually fighting wars, its potential for soon playing a revolutionary role, and the relatively small amount of public discourse on that potential revolution. And that's why I'm glad to see Chris Csikszentmihályi writing these articles.

But I don't get the weirdly literal reference to robots turning against their creators or the out-of-left-field positioning of open source as the savior that will prevent us from creating killer robots in the first place.

August 13, 2007

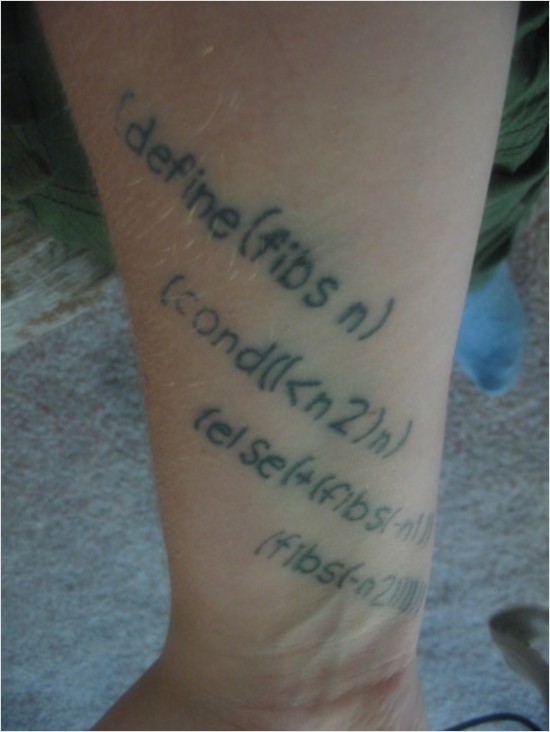

OpenGenera VLM Warez Torrent

A post on programming.reddit.com links to a torrent of an OpenGenera Virtual Lisp Machine, which can be run on Linux [via Anarchaia].

It's got 35 seeders and 10 leechers at the moment. And downloading it is illegal.

August 09, 2007

Urban Challenge Semi-Finalists and Contest Site Announced

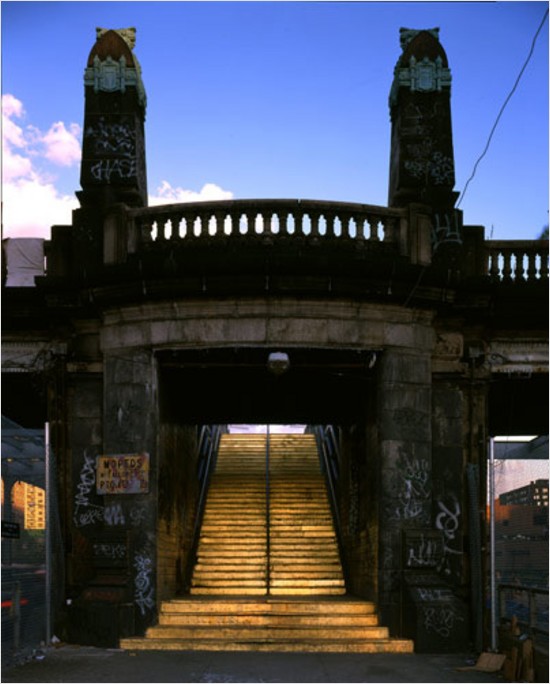

Looks like this will be the site of the challenge.

Google Maps link.

DARPA has announced the 36 semi-finalists for this year's Urban Challenge.

DARPA also announced that both the Urban Challenge NQE and final event will take place at the urban military training facility located on the former George Air Force Base in Victorville, Calif. DARPA selected the location because its network of urban roads best simulates the type of terrain American forces operate in when deployed overseas. “The robotic vehicles will conduct simulated military supply missions at the site. This adds many of the elements these vehicles would face in operational environments,” explained Dr. Tether.

The Victorville site is currently used by the U.S. Army to train for urban operations. As soon as the Army finishes their training rotation, DARPA will conduct clean-up operations to ready the site for the competition. DARPA emphasized that as of today’s announcement, the site is closed until team arrival on October 24. Photographs of the site and more information about the event are available at www.darpa.mil/grandchallenge.

At the NQE and the final event, the robots must operate entirely autonomously, without human intervention, and obey California traffic laws while performing maneuvers such as merging into moving traffic, navigating traffic circles, and avoiding moving obstacles. Dr. Tether noted, “The vehicles must perform as well as someone with a California Driver’s License.”

DARPA conducted competitive site visits across the United States to select the semi-finalists. Dr. Tether told attendees at DARPATech that he was at a site visit and was surprised how well the team’s autonomous vehicle made it through an intersection with other cars, just as if there was a human driver in the vehicle. “The depth and quality of this year’s field of competitors is a testimony to how far the technology has advanced since the first Grand Challenge in 2004. DARPA thanks all the contestants for their hard work and dedication and congratulates the teams selected as semi-finalists,” Dr. Tether said.

To qualify for winning, the robo-cars will have to complete a 60-mile fuel resupply mission in under six hours—avoiding moving obstacles, merging into oncoming traffic, and obeying California's driving laws. But the eventual $2 million victor will be picked by a panel of judges—not judged strictly on time.

At today's DARPATech conference, agency director Tony Tether said that prize is “in danger of being passed out.” He recently saw a robot approach an intersection, he said. Then the driverless vehicle waited for other cars to pass, flipped on its turning signal, and drove away.

Update: Ooh, I coincidentally ran across this description of the announced Urban Challenge site: “Note that this area is full of abandoned housing that is host to squatters, gangs, and other marginal people. It is extremely dangerous, and you should not visit this area unless you are prepared to handle these kinds of people.”

August 06, 2007

August 03, 2007

Moon Audio/Visual

Yesterday I wondered what would happen if I stored the raw data from a pixmap image to a WAV file, converted that file to an MP3, then back to a WAV, then finally reconstituted it as a pixmap.
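Here's a minimal Python sketch of the first and last steps of that experiment, assuming the image is 8-bit grayscale and you already have its raw pixel bytes (for example, the data section of a binary PGM). The helper names are hypothetical, and the MP3 encode/decode in the middle would be done with an external encoder, which isn't shown.

```python
import io
import wave

SAMPLE_RATE = 16000  # 16 kHz, the rate used for the audio version of the photo


def pixels_to_wav(pixels: bytes, sample_rate: int = SAMPLE_RATE) -> bytes:
    """Write raw 8-bit pixel values out as mono 8-bit PCM samples."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as w:
        w.setnchannels(1)  # mono
        w.setsampwidth(1)  # 1 byte per sample; 8-bit WAV samples are unsigned, like pixels
        w.setframerate(sample_rate)
        w.writeframes(pixels)
    return buf.getvalue()


def wav_to_pixels(wav_bytes: bytes) -> bytes:
    """Read the samples back; each sample byte is one pixel value."""
    with wave.open(io.BytesIO(wav_bytes), "rb") as w:
        return w.readframes(w.getnframes())


def pixels_to_pgm(pixels: bytes, width: int, height: int) -> bytes:
    """Reconstitute a binary (P5) PGM pixmap from grayscale pixel bytes."""
    if len(pixels) != width * height:
        raise ValueError("pixel count doesn't match width * height")
    return b"P5\n%d %d\n255\n" % (width, height) + pixels


# Hypothetical 256x4 gradient image, round-tripped through WAV without MP3 in between.
pixels = bytes(range(256)) * 4
restored = wav_to_pixels(pixels_to_wav(pixels))
pgm = pixels_to_pgm(restored, 256, 4)
```

Without the MP3 step the round trip is lossless. An MP3 decoder will typically hand back 16-bit samples and may add a little padding at the start and end, so in practice you'd convert the sample width and trim before rebuilding the pixmap.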

Here's the original photo I used (I can't remember what site I got this from, unfortunately [see update below]):

You can hear what this image sounds like (16 kHz sample rate).

It turns out the answer is that there's no noticeable difference after one conversion cycle. To see any real difference I had to run multiple generations of MP3 compression, all at a constant 96 kbps. (To hear the audio artifacting that comes from repeated MP3 compression, check here.)

Here's the result after 20 generations:

Gavin pointed out that it mostly just lost contrast. If you renormalize the histogram, this is what you get:
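One reading of "renormalize the histogram" is a linear contrast stretch: map the darkest surviving pixel value to 0 and the brightest to 255, scaling everything in between. Here's a minimal sketch on 8-bit grayscale pixel bytes; the function name is hypothetical, and histogram equalization would be a different, nonlinear alternative.

```python
def stretch_contrast(pixels: bytes) -> bytes:
    """Linearly rescale 8-bit pixels so the darkest becomes 0 and the brightest 255."""
    lo, hi = min(pixels), max(pixels)
    span = hi - lo
    if span == 0:
        return bytes(pixels)  # a flat image has no contrast to restore
    # Integer arithmetic, rounded to the nearest value; results stay within 0..255.
    return bytes(((p - lo) * 255 + span // 2) // span for p in pixels)


# A washed-out image whose values only span 100..120 gets spread back over 0..255.
print(list(stretch_contrast(bytes([100, 110, 120]))))
```

A stretch like this can't bring back detail that the repeated compression actually destroyed; it only restores the range.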

Now I want to see the result of compressing audio with JPEG, but I wouldn't be able to stand the disappointment if I spent a couple hours doing it myself and then discovered that again there was no interesting difference. So I guess that means it's your turn to contribute something for once.

Update: The photo is by my buddy Christopher Trott.

Update: There's more discussion on programming.reddit.com.

SWORDS

An armed TALON robot patrols a convention center defended by non-lethal “dazzler” carpet.

Three SWORDS TALON teleoperated robots have become the first armed land robots in a war zone.

The SWORDS, modified versions of bomb-disposal robots used throughout Iraq, were first declared ready for duty back in 2004. But concerns about safety kept the robots from being sent to the battlefield. The machines had a tendency to spin out of control from time to time. That was an annoyance during ordnance-handling missions; no one wanted to contemplate the consequences during a firefight.

So the radio-controlled robots were retooled for greater safety. In the past, weak signals would keep the robots from getting orders for as much as eight seconds, a significant lag during combat. Now, the SWORDS won't act on a command unless it's received right away. A three-part arming process, with both physical and electronic safeties, is required before firing. Most importantly, the machines now come with kill switches, in case there's any odd behavior. “So now we can kill the unit if it goes crazy,” Zecca says.
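The article doesn't say how the SWORDS software actually implements these safeguards, so the following is a purely hypothetical Python sketch of the three ideas in that paragraph: drop commands that arrive late, require every arming step before a fire command is honored, and obey a kill command no matter what. All names, the 0.5-second freshness limit, and the assumption that the operator and robot share a clock are made up for illustration.

```python
import time
from dataclasses import dataclass

# Hypothetical arming steps standing in for the "three-part arming process".
ARMING_STEPS = ("physical_safety_off", "electronic_safety_off", "arm_confirm")


@dataclass
class Command:
    name: str
    sent_at: float  # sender timestamp; assumes a clock shared with the robot


class GuardedController:
    def __init__(self, clock=time.monotonic, max_age_s=0.5):
        self.clock = clock
        self.max_age_s = max_age_s  # commands older than this are dropped
        self.armed_steps = set()
        self.killed = False

    def handle(self, cmd: Command) -> str:
        # A kill is honored even if it arrives late: stopping is always safe.
        if cmd.name == "kill":
            self.killed = True
            self.armed_steps.clear()
            return "killed"
        if self.killed:
            return "ignored: killed"
        # Act only on commands that arrived "right away".
        if self.clock() - cmd.sent_at > self.max_age_s:
            return "ignored: stale"
        if cmd.name in ARMING_STEPS:
            self.armed_steps.add(cmd.name)
            return "arming"
        if cmd.name == "fire":
            # Every arming step must have been completed first.
            if self.armed_steps == set(ARMING_STEPS):
                return "fire"
            return "ignored: not armed"
        return "ok"
```

With this design, a fire command delayed by eight seconds, like the lag described in the quote, is simply discarded rather than executed late.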

Oh man.