September 28, 2005

Grand Challenge Gearing Up

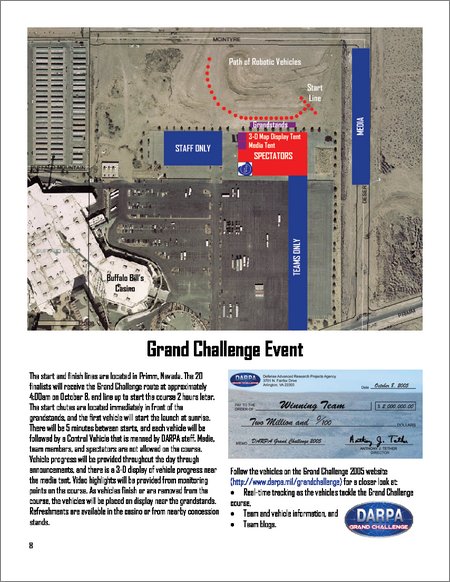

Today the Grand Challenge “National Qualifying Event” began, at the California Speedway in Fontana. The qualifying trials will last for the next seven days, after which the 20 teams that make it will travel to Primm, NV. Then on Saturday at about 4:00 AM DARPA will give the teams the GPS waypoints for this year's route, and at 6:30 AM the first vehicle will begin the race.

One guy who was at the speedway today and saw a few of the trial runs said “Not only are we going to have a winner on the GC, but I predict many vehicles will finish.” Maybe I'll drive to Fontana tomorrow.

DARPA has a nice brochure (1 MB PDF) with annotated satellite images and everything.

The grandchallenge.org site has what looks like a new RSS feed aggregating GC team weblogs.

September 27, 2005

Predators Band Together

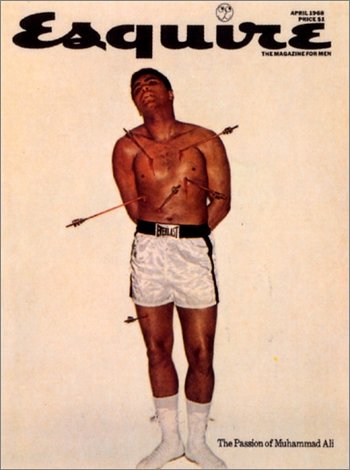

Muhammad Ali as a martyr, George Lois, 1968

It seems like this is all happening pretty quickly: “Predators fly first ever four-ship sorties”.

During these sorties, members from the 53rd Test and Evaluation Group, Detachment 4, tested the MAC Ground Control Station on its ability to enable a single pilot to simultaneously control four Predator aircraft over the skies of southern Nevada.

“Our pilots were impressed with the technology integration, human-machine interface and situational awareness provided by the MAC GCS,” said Lt. Col. Steven Tanner, 53rd TEG Det. 4 commander. “We spent six months developing comprehensive training and safety plans to ensure that these initial MAC four-ship test sorties were successful. Once we fully train our pilots and sensor operators on this new technology, we will initiate the process of evaluating the operational capabilities of the MAC system.”

It totally sounds like we're living in the montage describing the rise of SkyNet.

September 23, 2005

Scheme Is Love

Don Box, in MSDN magazine no less, declares “Scheme is Love”.

All the cool languages have lambda these days. Lisp's lambda is nicer than many, but maybe he should have demonstrated macros instead. And focused on Common Lisp. But who am I to tell someone else what to love?

More Smoke

LA Times photo

Last night Vandenberg's launch of a DARPA satellite caused everyone in LA to stop their cars and bust out the cameras. I wish I had seen it; the launch of a Minuteman III I saw a few years ago profoundly freaked me out for the period during which I had no working hypothesis to explain the phenomenon.

September 22, 2005

Three UAV Firsts

All via Unmanned Aerial Vehicles.

An Aerosonde UAV taking off

Hurricane researchers at the NOAA Atlantic Oceanographic and Meteorological Laboratory in Miami, Fla., marked a new milestone in hurricane observation as the first unmanned aircraft touched down after a 10-hour mission into Tropical Storm Ophelia, which lost its hurricane strength Thursday night. The aircraft, known as an Aerosonde, provided the first-ever detailed observations of the near-surface, high wind hurricane environment, an area often too dangerous for NOAA and U.S. Air Force Reserve manned aircraft to observe directly.

“It's been a long road to get to this point, but it was well worth the wait,” said Joe Cione, NOAA hurricane researcher at AOML and the lead scientist on this project. “If we want to improve future forecasts of hurricane intensity change we will need to get continuous low-level observations near the air-sea interface on a regular basis, but manned flights near the surface of the ocean are risky. Remote unmanned aircraft such as the Aerosonde are the only way. Today we saw what hopefully will become 'routine' in the very near future.”

“Green Light Imminent for UAV Airspace Initiative”:

“The UK CAA has for many years recognised the need for UAV regulation for predominantly civil UAVs. We are at a unique point in aviation history, and it would be helpful if our civil UAV regulation was also in register with military.”

“UAV's Deployed in Israel's Roads to Catch Violators”:

The traffic department of Israel’s police has begun operating an unmanned aerial vehicle (UAV) as part of its enforcement of traffic laws...

Ground operators spot a vehicle committing a traffic violation and then instruct the UAV to follow the vehicle. Police cars stationed in the area then stop the driver and the officers are able to immediately show the violator the infraction recorded by the UAV.

ACL Annotations

I'm surprised I have not yet linked to Chris Riesbeck's annotations on Paul Graham's ANSI Common Lisp.

This binary tree code is very inefficient. Inserting a new element, for example, copies the nodes above the point where the element is inserted. Exactly how much gets copied is left as an exercise for the reader, but such exercises should not be left for maintainers.
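To make the copying concrete, here is a minimal Python sketch of a non-destructive binary search tree insert. It is not Graham's Lisp code or Riesbeck's suggested fix; the `Node` type and `bst_insert` name are made up for illustration. Every node on the path from the root to the insertion point is rebuilt, while subtrees off that path are shared with the old tree.

```python
from typing import NamedTuple, Optional


class Node(NamedTuple):
    """Immutable tree node (hypothetical type for this sketch)."""
    elt: int
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def bst_insert(tree: Optional[Node], elt: int) -> Node:
    """Return a new tree containing elt; the old tree is left untouched."""
    if tree is None:
        return Node(elt)
    if elt == tree.elt:
        return tree
    if elt < tree.elt:
        # Rebuild this node; the right subtree is shared, not copied.
        return Node(tree.elt, bst_insert(tree.left, elt), tree.right)
    # Rebuild this node; the left subtree is shared, not copied.
    return Node(tree.elt, tree.left, bst_insert(tree.right, elt))


old = None
for x in (5, 3, 8):
    old = bst_insert(old, x)
new = bst_insert(old, 4)

# The nodes holding 5 and 3 were rebuilt; the node holding 8 is shared.
print(new is old, new.left is old.left, new.right is old.right)
```

In this sketch the copy count grows with the depth of the insertion point, which is cheap for a balanced tree and costly for a degenerate one.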

September 20, 2005

Accelerating Change 2005

After an accident on the 5 delayed me a bit, I ended up driving straight to San Francisco to the location of the Rhythm Society celebration on Friday night. Nine thumpy, huggy hours later Eric and I then pretty much drove straight to the Stanford Campus in time to see Vernor Vinge rhapsodize about the coming day when humanity will wake up to find that the entire world has changed overnight, and that “sand now talks back to us”, and we are doomed.

Overall, Accelerating Change 2005 didn't seem all that great to me. Seeing log chart after log chart showing exponential growth in the number of bits in the world, the number of internet hosts, the speed with which brains are imaged and the number of pies eaten per day got boring, and it didn't feel like new information to me.

There were maybe four things that stuck out.

1. Ray Kurzweil's charts of exponential growth and the predictions of dramatic change within our lifetime. That is, dramatically more dramatic change than the humans who lived before us experienced—things like being able to dump the entire contents of your brain into a big hard drive and reconstitute or analyze it usefully in some way later. Supposedly we're at the inflection point for all these curves.

If this is true then we are the luckiest (unluckiest) humans to have ever lived. This would be a very big coincidence that needs some explaining.

2. Ron Kaplan, the Director of Natural Language Research at PARC, predicted that we would see conversational interfaces that operated at the level of an eight-year-old human by 2010.

I actually felt angry during this presentation because he seemed so confident and yet didn't address the important issue that I think is a big obstacle to achieving his goal, which is that no matter how many exponential curves you have in your favor, doing the representation work for this stuff is very hard--nobody really knows how to do it (and he did mention representation would be required, so he isn't planning on doing Google-style mining). And even if you create a representation for one or two or three small domains, like making travel reservations or querying the state of some system, nobody really knows how to combine those representations in a consistent way.

(This is a more specific version of my more general gripe with the Singularity idea: It doesn't matter how fast computers get or how fast we can scan a brain, we just don't know how to turn that into something that acts intelligently enough to take over the world and replace us.)

Or maybe this representation stuff is considered an almost-solved problem and I just don't know it. I know some people are certainly making some progress on it, at least.

None of the people in the conversational interfaces presentation even tried to address anything other than simple question answering systems, either. I want software that I can give instructions to and that can take action on its own.

And really, can you imagine trying to make a flight reservation with an eight-year-old at the other end of the phone line?

3. Rudy Rucker referred to the “millennial fever of the Singularitarians”, which cracked me up. I love that guy.

I forgot what my original #4 was going to be, so here's 4A and 4B:

4A. Telling Eric my new Lisp idea, which I considered borderline-crackpot-level, and him crying “That's my idea!”

4B. Running into an Austrian Lisper at the conference (Manuel, what was your last name again?), who 1. recognized me, 2. pointed me at some ideas relevant to my crackpot idea from 4A and 3. asked if I had heard of a company called I/NET that's been doing some really neat things. Heard of it? Yeah, I was in the shit.

I just remembered what #4 was going to be: David Smith talked about Croquet, the Smalltalk-based 3D collaborative environment. It was pretty bad. I kept thinking that if they embedded Smalltalk into the Quake engine they would have started at a point much further than they've gotten after however long they've been working on it. Smith showed off features that online games have had for years, and he seemed to think they were really neat. The following presentation on Second Life must have been absolutely humiliating for the Croquet people (if it wasn't, it should have been). The Second Lifers showed off almost exactly the same things Croquet does, only it looked way better and had a nicer interface, and they have 50,000 users. pwned.

September 17, 2005

Lisp Metadata Importer 0.0.2

I got one hour of sleep last night, but I still found time today to put together a package containing the Spotlight plugin I wrote for indexing Lisp files: Lisp Metadata Importer 0.0.2.dmg.

What does it do?

This importer plugin indexes files with .lisp, .lsp and .cl extensions using the Spotlight search engine that was introduced by Apple in OS X 10.4.

You might notice that even before you install this plugin, some of your Lisp files have already been indexed by Spotlight. It is possible that something on your system set the type of some files as “TEXT”, in which case the default Spotlight text indexer will process the files. This only happens on a file-by-file basis, though, whereas the Lisp Metadata Importer instructs the system to index all files with the extensions listed above, regardless of whether or not the system already thinks they’re text files. The Lisp importer also does some Lisp-specific indexing that you might find more useful than the default text indexing.

How do I install it?

Copy the Lisp Metadata Importer.mdimporter file into the /Library/Spotlight folder.

How do I uninstall it?

Remove the Lisp Metadata Importer.mdimporter file from the /Library/Spotlight folder.

How do I test it once it is installed?

Try indexing a single Lisp file using the mdimport command. When you run mdimport with the -d1 flag it will tell you which plugin it’s using, if any, to index the file. You should see a reference to the Lisp Metadata Importer.mdimporter file.

lem:~ $ mdimport -d1 variables.lisp

2005-09-15 12:05:55.493 mdimport[6962] Import '/Users/wiseman/variables.lisp' type 'com.lemonodor.lisp-source' using 'file://localhost/Library/Spotlight/Lisp%20Metadata%20Importer.mdimporter/'

Once you’ve run mdimport, use the mdls command to look at the metadata associated with the file. The important things to look for are that the kMDItemContentType is “com.lemonodor.lisp-source”, and that there are some attributes with names that begin with “org_lisp”, like “org_lisp_defmacros” or “org_lisp_defuns”.

lem:~ $ mdls variables.lisp

variables.lisp -------------

kMDItemAttributeChangeDate = 2005-09-08 11:30:18 -0700

kMDItemContentCreationDate = 2005-09-02 17:41:07 -0700

kMDItemContentModificationDate = 2005-09-02 17:41:08 -0700

kMDItemContentType = "com.lemonodor.lisp-source"

kMDItemContentTypeTree = (

"com.lemonodor.lisp-source",

"public.source-code",

"public.plain-text",

"public.text",

"public.data",

"public.item",

"public.content"

)

kMDItemDisplayName = "variables.lisp"

# and on and on, until finally...

org_lisp_defclasses = ("i-dont-think-so")

org_lisp_defgenerics = (attack, "(setf mood)", "(setf mood)")

org_lisp_definitions = (

foo,

"(setf foo)",

"oh-noe",

"*oh-no*",

"*hee-ho*",

"+thing+",

"i-dont-think-so",

"BRAIN-CELL",

"(RAT-BRAIN-CELL",

attack,

attack,

"(setf mood)",

"(setf mood)"

)

org_lisp_defmacros = ("oh-noe")

org_lisp_defmethods = (attack, "(setf mood)")

org_lisp_defstructs = ("BRAIN-CELL", "RAT-BRAIN-CELL")

org_lisp_defuns = (foo, "(setf foo)")

org_lisp_defvars = ("*oh-no*", "*hee-ho*", "+thing+")

If you see those attributes then the importer is working correctly. If the importer doesn’t seem to be working and you’ve double checked to make sure you copied it to the correct folder, try the mdimport -r trick in the next question & answer; it’s the equivalent of kicking a malfunctioning jukebox.

How do I index all my Lisp files?

Use mdimport again, this time with the -r flag, and passing it the path to the Lisp plugin.

lem:~ $ mdimport -r /Library/Spotlight/Lisp\ Metadata\ Importer.mdimporter/

2005-09-15 12:41:38.650 mdimport[7169] Asking server to reimport files with UTIs: (

"dyn.ah62d4rv4ge8024pxsa",

"com.lemonodor.lisp-source",

"dyn.ah62d4rv4gq81k3p2su11uppqrf31appxr741e25f",

"dyn.ah62d4rv4ge80265u",

"dyn.ah62d4rv4ge80g5a"

)

What exactly is being indexed?

The Lisp metadata importer indexes the definitions contained in a file. This includes functions, macros, classes, methods, generic functions, structures, defvars, defparameters and defconstants. It also includes any object FOO defined by a form that looks like “(defsomething FOO ...)”. In addition to definitions, the entire contents of the file are indexed for full text queries.

How do I search for something?

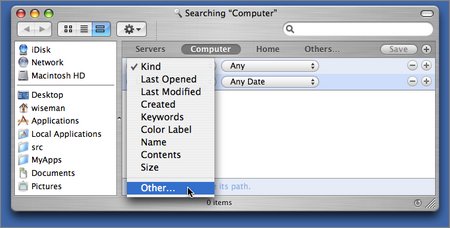

The GUI way is to hit Command-F in the Finder to bring up a Find window. Click on one of the attributes and select “Other...” to see a list of other attributes:

Choose one of the Lisp importer’s attributes from the list that comes up (you can type “lisp” into the search field on the upper right to filter out the non-Lisp attributes):

Now enter the text you’d like to search for and watch the matching files appear:

Some people have reported that the Lisp-specific attributes weren’t available in the Find dialog until they re-launched the Finder (to re-launch the Finder, hit Command-Option-Esc and then select the Finder in the “Force Quit Applications” dialog that pops up).

The non-GUI way to do Spotlight searches is to use the mdfind command. I did this the other day when someone on IRC asked how to do search-and-replace on a string. I knew I had written a function to do that, but I couldn’t remember which project the code was in.

heavymeta:~ $ mdfind "org_lisp_defuns == '*search*replace*'"

/Users/wiseman/inet-projects/echo/server/xml-rpc.lisp

/Users/wiseman/inet-projects/il/utilities/xml-rpc/pregexp-utils.lisp

/Users/wiseman/John/src/www-link-validator/link-validator.lisp

(It turned out I had a couple implementations lying around.)

The Spotlight query language used by mdfind is documented online by Apple.

What are the attributes I can search on and where do they come from in the Lisp file?

The following metadata attributes are defined by the Lisp Metadata Importer:

| Metadata Attribute | Defining Forms |
|---|---|
| org_lisp_defuns | defun |
| org_lisp_defmacros | defmacro |
| org_lisp_defclasses | defclass |
| org_lisp_defgenerics | defgeneric |
| org_lisp_defmethods | defmethod |
| org_lisp_defstructs | defstruct |
| org_lisp_defvars | defvar, defparameter, defconstant |
| org_lisp_definitions | Anything defined with a “(def...” form. |

In addition, the importer sets the kMDItemTextContent attribute to be the entire contents of the file, so you can do full text searches.

Is the source code available?

Yes it is. Look in the Source Code folder. You’ll see that the Lisp Metadata Importer is written in Objective C and is covered under the MIT License.

What shortcuts did you take?

Here are a few I can think of:

- The importer only indexes definition forms that are at the beginning of a line.

- It has a very simple, limited concept of symbol names and Lisp reader syntax, so it can easily become confused.

- I shouldn’t really use the org_lisp prefix for attribute names.

- I should try to coordinate with the people writing plugins for Ruby, Python and other languages so we can come up with a common set of source code metadata attributes.
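The first two shortcuts are easy to see in a small experiment. The Python sketch below is not the importer's Objective C code; it borrows the `defun` pattern from the earlier prototype post and assumes line-by-line matching, and the sample lines and the `defun_name` helper are invented for illustration.

```python
import re

# Assumption: a line-anchored pattern like the prototype's defun regex.
DEFUN = re.compile(r"(?i)^\(defun\s+([^\s\)]+)")


def defun_name(line):
    """Return the captured function name, or None if the line is skipped."""
    match = DEFUN.match(line)
    return match.group(1) if match else None


samples = [
    "(defun top-level () 1)",     # starts in column 0: indexed
    "  (defun nested () 2)",      # indented: missed entirely
    "(defun |odd name| () 3)",    # escaped symbol: name is cut at the space
]
for line in samples:
    print(repr(line), "->", defun_name(line))
```

A definition wrapped in something like `eval-when` or `flet` would typically be indented, so this style of matching skips it.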

Who should you thank?

Justin Wight, Pierre Mai, Ralph Richard Cook and Bryan O’Connor all helped me to some extent. Thanks, guys!

September 16, 2005

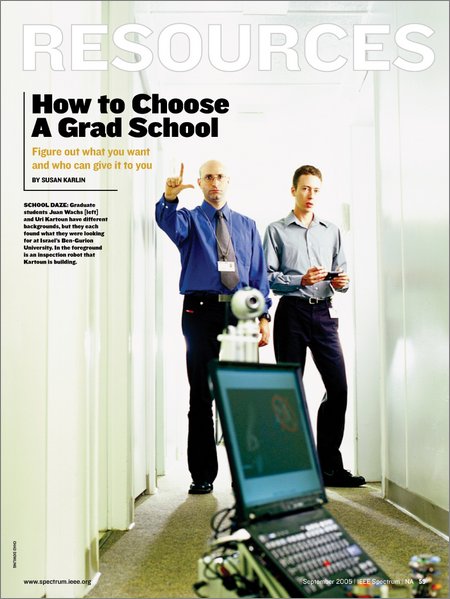

ER1 in Spectrum

Well, sort of. IEEE Spectrum's September issue has an article on choosing grad schools [PDF version] with a nice photo featuring an ER1.

“Can you hold on a minute? I need to charge my robot.”

Uri Kartoun is developing robots, nicknamed EDNex and Clango, for handling suspicious packages. Down the hall, classmate Juan Wachs is working on a computer interface that responds to hand gestures.

September 14, 2005

Dodgers Lose, We Win

Last night Lori and I hung out in some unfathomably expensive luxury suites at Dodger Stadium. All-you-can-eat Dodger Dogs and a visit by Tommy Lasorda.

It was OK, but I kept thinking “If only Andy were here!” (Andy took me to my first baseball game, back in Chicago.)

September 13, 2005

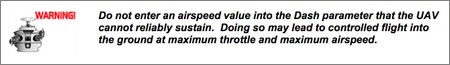

Controlled Flight Into the Ground

From the AP50 AutoPilot Flight Control System Manual (pdf), an awesome failure mode:

Actually this thing is a pretty cool looking, low-cost auto pilot.

Y-Combinator in Wired

“Stars Rise at Startup Summer Camp”:

Graham, a startup evangelist who sold his company to Yahoo in 1998, came up with the idea of paying students to program instead of working a summer job after giving a talk about startups to Harvard University computer science undergraduates.

He advised them to get their funding from angel investors who got rich in technology themselves.

“Then I said jokingly, but not entirely jokingly, ‘But not from me,’ and everyone's faces fell,” Graham said. “Afterwards, I had dinner with some of these guys and they seemed amazingly competent and I thought, ‘You know, these guys probably could start companies.’”

Soon after, Graham teamed up with an investment banker and two hackers from his old company, and the group announced the Summer Founders Program in late March.

Though hopefuls had only 10 days to respond, some 227 teams applied.

I don't think I knew that Aaron Swartz was doing Infogami.

September 12, 2005

Accelerating Change

This weekend I'll be at the Accelerating Change conference at Stanford.

Vernor Vinge, Ray Kurzweil, Peter Norvig, Rudy Rucker, Terry Winograd, and more. Hell yeah.

Diff-Sexp

Michael Weber: “A #lisp conversation sparked the cunning idea to write a diff-like algorithm for s-expressions.”

The algorithm is based on Levenshtein-like edit distance calculations. It tries to minimize the number of atoms in the result tree (also counting the edit conditionals |#-new|, |#+new|). This can be observed in the example below: instead of blindly adding more edit conditionals, the algorithm backs up and replaces the whole subexpression.

DIFF-SEXP> (diff-sexp '(DEFUN F (X) (+ (* X 2) 4 1)) '(DEFUN F (X) (- (* X 2) 5 3 1)))

((DEFUN F (X) (|#-new| + |#+new| - (* X 2) |#-new| 4 |#+new| 5 |#+new| 3 1)))

DIFF-SEXP> (diff-sexp '(DEFUN F (X) (+ (* X 2) 4 4 1)) '(DEFUN F (X) (- (* X 2) 5 5 3 1)))

((DEFUN F (X) |#-new| (+ (* X 2) 4 4 1) |#+new| (- (* X 2) 5 5 3 1)))
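The Levenshtein-like part can be sketched for a single flat list in Python. This is a simplification and not Michael Weber's code: it does not recurse into subexpressions and never backs up to replace a whole subexpression. The names `diff_flat` and `side` are hypothetical, and breaking ties in favor of deletion is an assumption.

```python
OLD, NEW = "#-new", "#+new"   # stand-ins for the |#-new| and |#+new| markers


def diff_flat(old, new):
    """Merge two flat lists, tagging items unique to either side."""
    n, m = len(old), len(new)
    # cost[i][j]: fewest tagged items needed to merge old[i:] with new[j:].
    cost = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(n + 1):
        cost[i][m] = n - i
    for j in range(m + 1):
        cost[n][j] = m - j
    for i in range(n - 1, -1, -1):
        for j in range(m - 1, -1, -1):
            if old[i] == new[j]:
                cost[i][j] = cost[i + 1][j + 1]
            else:
                cost[i][j] = 1 + min(cost[i + 1][j], cost[i][j + 1])
    # Walk the table from the front, emitting the merged list.
    out, i, j = [], 0, 0
    while i < n or j < m:
        if i < n and j < m and old[i] == new[j]:
            out.append(old[i])
            i, j = i + 1, j + 1
        elif j == m or (i < n and cost[i + 1][j] <= cost[i][j + 1]):
            out += [OLD, old[i]]
            i += 1
        else:
            out += [NEW, new[j]]
            j += 1
    return out


def side(merged, keep):
    """Recover one input list by keeping untagged items and those tagged keep."""
    out, items = [], iter(merged)
    for item in items:
        if item in (OLD, NEW):
            tagged = next(items)
            if item == keep:
                out.append(tagged)
        else:
            out.append(item)
    return out


# The argument lists from the first example, with (* X 2) treated as one atom.
merged = diff_flat(["+", ("*", "X", 2), 4, 1], ["-", ("*", "X", 2), 5, 3, 1])
print(merged)
```

Filtering the merged list with `side(merged, OLD)` or `side(merged, NEW)` gives back the original or the new list, which is a handy sanity check on any diff of this kind.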

September 09, 2005

Trivial Lisp Stats

That parrot wasn't just eye candy.

On one machine I have 14,347 files with names that end in “.lisp”. Of those, 3,664 contain data that is not valid UTF-8.
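A count like that can be reproduced with a short script. This is a minimal Python sketch, not whatever was actually used for the numbers above; the function names are made up, and it treats a file as invalid if decoding its full contents as UTF-8 fails.

```python
from pathlib import Path


def is_valid_utf8(data: bytes) -> bool:
    """True if the bytes decode cleanly as UTF-8."""
    try:
        data.decode("utf-8")
    except UnicodeDecodeError:
        return False
    return True


def count_invalid(root, suffix=".lisp"):
    """Return (total, invalid) for files under root whose names end in suffix."""
    total = invalid = 0
    for path in Path(root).rglob("*" + suffix):
        if not path.is_file():
            continue
        total += 1
        if not is_valid_utf8(path.read_bytes()):
            invalid += 1
    return total, invalid


if __name__ == "__main__":
    print(count_invalid("."))
```

Pure ASCII files count as valid here, so the invalid ones are mostly files saved in Latin-1, MacRoman or similar single-byte encodings.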

September 08, 2005

UAVs over Louisiana

The 10 Evolution mini-UAVs flying out of the New Orleans Naval Air Station and “[assessing] damage to oil and gas distribution, dikes, berms and other aspects of the region's infrastructure” are apparently part of the biggest civilian UAV deployment ever.

I think it's pretty clear that lack of high tech wasn't the biggest problem faced by the people of New Orleans when Katrina hit, and it's not the biggest problem faced by the survivors. I'm not sure what can be done to make things right, but this might be a start:

September 06, 2005

Morning Improv RSS Feed

I'm generating an RSS feed for Scott McCloud's Morning Improv blog.

I've got Lisp code scraping his HTML to create the feed, just like I do for the DARPA Grand Challenge feed, so there are some limitations. In particular, again there are no permalinks, but I'm hoping to talk to Scott and get that fixed. Or at least semi-fixed.
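The missing-permalink problem shows up when building the feed items. The Python sketch below is not the Lisp scraper; it skips the scraping step and assumes entries arrive as (title, body) pairs. The `build_feed` name and the example URL are placeholders. Without a permalink, each item points at the blog's front page and gets a GUID derived from its content, marked as not being a permalink.

```python
import hashlib
import xml.etree.ElementTree as ET


def build_feed(title, link, entries):
    """Build a minimal RSS 2.0 document from (entry_title, body) pairs."""
    rss = ET.Element("rss", version="2.0")
    channel = ET.SubElement(rss, "channel")
    ET.SubElement(channel, "title").text = title
    ET.SubElement(channel, "link").text = link
    ET.SubElement(channel, "description").text = title
    for entry_title, body in entries:
        item = ET.SubElement(channel, "item")
        ET.SubElement(item, "title").text = entry_title
        ET.SubElement(item, "description").text = body
        # No per-entry URL is available, so every item links to the front page.
        ET.SubElement(item, "link").text = link
        # Content hash as a stable identifier; it changes if the entry is edited.
        digest = hashlib.sha1((entry_title + "\n" + body).encode("utf-8")).hexdigest()
        ET.SubElement(item, "guid", isPermaLink="false").text = digest
    return ET.tostring(rss, encoding="unicode")


print(build_feed("Example blog", "http://example.com/", [("First", "Hello.")]))
```

The drawback of a content-derived GUID is that editing an entry makes it look new to feed readers.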

Aaron: Scott has officially left the no-RSS ghetto.

The feed is already aggregated by LiveJournal, if you're into that.

September 04, 2005

Want To Learn Lisp

Battleship Arkansas Being Tossed in Giant Pillar, Grant Powers, Watercolor, 1946

~~80~~ 86 people on 43 Things want to learn Lisp.

21 already have—of those, four have said it wasn't worth doing.

September 02, 2005

Lisp Metadata Importer I

I now have a pretty functional Spotlight indexer for Lisp files based on Jonathan Wight's Python indexer.

The heart of Jonathan's plugin is actually implemented in Python, and uses the parser module to parse the Python code being indexed and grovel through its abstract syntax trees. I began by fiddling with the Python code. You might expect that I would have started by trying to embed some Lisp environment into the plugin, but you would be wrong; that's too much pain even for me and offers no clear benefit I could see. Coding in Python let me play around with parsing strategies more easily than going with straight Objective C would have.

The performance of a Python-based plugin is, however, atrocious. The first version of my code, which just matched lines against a (precompiled) regular expression, was able to index at the rate of about 1.4 files/second (302 lines/second). A rough estimate of the time it would take to index all the Lisp files on my PowerBook is an hour and a half. Unacceptable.

So I rewrote it in Objective C. The resulting code runs about 16x faster than the Python version, even though it does more. It should be able to index every Lisp file I have on this machine in five minutes.
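Those figures hang together. A back-of-the-envelope check in Python, using only the numbers quoted above (the function names are made up): at 1.4 files/second, an hour and a half works out to roughly 7,560 files, and a 16x speedup brings 90 minutes down to about 5.6 minutes, in line with the "five minutes" estimate.

```python
FILES_PER_SECOND = 1.4       # Python prototype rate quoted above
LINES_PER_SECOND = 302       # Python prototype rate quoted above
PYTHON_ESTIMATE_HOURS = 1.5  # "an hour and a half"
SPEEDUP = 16                 # "about 16x faster"


def implied_file_count(files_per_second, hours):
    """Number of files the time estimate implies at the given rate."""
    return files_per_second * hours * 3600


def rewritten_minutes(hours, speedup):
    """Time for the same workload after the speedup, in minutes."""
    return hours * 60 / speedup


if __name__ == "__main__":
    print("implied files:", round(implied_file_count(FILES_PER_SECOND, PYTHON_ESTIMATE_HOURS)))
    print("average lines per file:", round(LINES_PER_SECOND / FILES_PER_SECOND))
    print("Objective C estimate (minutes):", rewritten_minutes(PYTHON_ESTIMATE_HOURS, SPEEDUP))
```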

Here's an example of the plugin in action. First, a test file, test.lisp:

(defun foo () 1 2 3)

;; Not handled very well yet.

(defun (setf foo) (whatever))

(defmacro oh-noe () (beep))

(defvar *oh-no* 1)

(defparameter *hee-ho* T)

(defconstant +thing+ :what-now)

(defclass i-dont-think-so () ())

(defstruct BRAIN-CELL owner alcohol-level)

(defstruct (RAT-BRAIN-CELL (:conc-name NIL)) color)

;; Haven't done these yet.

(defgeneric attack (self target))

(defmethod attack ((self giant-robot) (target puny-human)) (squish self target))

And here's the metadata that results from indexing it:

lem-airport:~/src/cm wiseman$ mdls test.lisp

test.lisp -------------

kMDItemAttributeChangeDate = 2005-09-02 13:34:24 -0700

kMDItemContentCreationDate = 2005-09-02 12:47:58 -0700

kMDItemContentModificationDate = 2005-09-02 12:51:07 -0700

[etc. Here's the good part:]

org_lisp_defclasses = ("i-dont-think-so")

org_lisp_definitions = (

foo,

"(setf",

"oh-noe",

"*oh-no*",

"*hee-ho*",

"+thing+",

"i-dont-think-so",

"BRAIN-CELL",

"(RAT-BRAIN-CELL",

attack,

attack

)

org_lisp_defmacros = ("oh-noe")

org_lisp_defstructs = ("BRAIN-CELL", "RAT-BRAIN-CELL")

org_lisp_defuns = (foo, "(setf")

org_lisp_defvars = ("*oh-no*", "*hee-ho*", "+thing+")

I decided to use nothing fancier than regular expressions to parse files, and you can see they need some tweaking.

NSString *LispDef_pat = @"(?i)^\\(def[^\\s]*[\\s\\']+([^\\s\\)]+)";

NSString *LispDefun_pat = @"(?i)^\\(defun\\s+([^\\s\\)]+)";

NSString *LispDefmacro_pat = @"(?i)^\\(defmacro\\s+([^\\s\\)]+)";

NSString *LispDefclass_pat = @"(?i)^\\(defclass\\s+([^\\s\\)]+)";

NSString *LispDefstruct_pat = @"(?i)^\\(defstruct\\s+\\(?([^\\s\\)]+)";

NSString *LispDefvar_pat = @"(?i)^\\((?:defvar|defparameter|defconstant)\\s+([^\\s\\)]+)";

Do those look reasonable? Is there anything I'm missing? (First person to give me a regex that correctly parses symbols escaped with the various methods allowed in Common Lisp gets... my pity.)
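One way to poke at those patterns is to port them to Python's `re` module and run them over test.lisp. This is a sketch, not the plugin's code: the `index_source` function and the dictionary layout are made up, and matching each line separately is an assumption. Under that assumption it gives the same definitions as the mdls output above, including the "(setf" and "(RAT-BRAIN-CELL" mistakes.

```python
import re

# The six patterns above, with the Objective C string escaping removed.
PATTERNS = {
    "org_lisp_definitions": r"(?i)^\(def[^\s]*[\s\']+([^\s\)]+)",
    "org_lisp_defuns": r"(?i)^\(defun\s+([^\s\)]+)",
    "org_lisp_defmacros": r"(?i)^\(defmacro\s+([^\s\)]+)",
    "org_lisp_defclasses": r"(?i)^\(defclass\s+([^\s\)]+)",
    "org_lisp_defstructs": r"(?i)^\(defstruct\s+\(?([^\s\)]+)",
    "org_lisp_defvars": r"(?i)^\((?:defvar|defparameter|defconstant)\s+([^\s\)]+)",
}
COMPILED = {attr: re.compile(pattern) for attr, pattern in PATTERNS.items()}

TEST_LISP = """\
(defun foo () 1 2 3)
;; Not handled very well yet.
(defun (setf foo) (whatever))
(defmacro oh-noe () (beep))
(defvar *oh-no* 1)
(defparameter *hee-ho* T)
(defconstant +thing+ :what-now)
(defclass i-dont-think-so () ())
(defstruct BRAIN-CELL owner alcohol-level)
(defstruct (RAT-BRAIN-CELL (:conc-name NIL)) color)
;; Haven't done these yet.
(defgeneric attack (self target))
(defmethod attack ((self giant-robot) (target puny-human)) (squish self target))
"""


def index_source(text):
    """Map each attribute name to the names captured from matching lines."""
    found = {attr: [] for attr in COMPILED}
    for line in text.splitlines():
        for attr, regex in COMPILED.items():
            match = regex.match(line)
            if match:
                found[attr].append(match.group(1))
    return found


for attr, names in index_source(TEST_LISP).items():
    print(attr, "=", names)
```

The "(setf" capture comes from the name group stopping at the first whitespace, so fixing it means treating a parenthesized name as a unit rather than adding one more character class.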

There's really nothing very lisp-specific about this plugin. It wouldn't be that hard to extend it to become some sort of universal regex-based Spotlight indexer for other text-based formats, though you'd have to do some fancy on-the-fly modification of the schema describing the file types for which it should be used.

I'd like to extend it to record defgeneric and defmethod forms, and then some simple who-calls functionality. Then you'll get the code and we'll see if this sort of indexing is actually useful to anyone.

Update: The importer is complete, see this post for details and availability.